mirror of

https://github.com/invoke-ai/InvokeAI.git

synced 2026-01-18 01:37:56 -05:00

Compare commits: psychedeli ... v3.2.0rc2 (7 commits)

- c439e9681e
- 369963791e
- 0d94bed9f8
- cec8ad57a5
- 003c2c28c9
- 661b3056ed
- 20f7e448c3

@@ -167,23 +167,6 @@ and so you'll have access to the same python environment as the InvokeAI app.

This is _super_ handy.

#### Enabling Type-Checking with Pylance

We use Python's typing system in InvokeAI. PR reviews will include checking that types are present and correct. We don't enforce types with `mypy` at this time, but that is on the horizon.

When you write typed code, it is very important to use a code analysis tool that type checks it automatically. These tools give you immediate feedback in your editor when types are incorrect, and following their suggestions leads to fewer runtime bugs.

Pylance, installed at the beginning of this guide, is the de facto Python language server (LSP, Language Server Protocol) for VSCode. It provides type checking in the editor (among many other features). Once it is installed, you need to enable type checking manually:

- Open a Python file
- Look along the status bar in VSCode for `{ } Python`
- Click the `{ }`
- Turn type checking on (basic is fine)

You'll now see red squiggly lines where type issues are detected. Hover your cursor over the indicated symbols to see what's wrong.

In 99% of cases when the type checker says there is a problem, there really is a problem, and you should take some time to understand and resolve what it is pointing out.
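
The following is a hypothetical illustration of the kind of mistake basic type checking reports in the editor before the code ever runs. The `ImageSize` dataclass and `scale_size` helper were invented for this example and are not part of InvokeAI.

```python
from dataclasses import dataclass


@dataclass
class ImageSize:
    width: int
    height: int


def scale_size(size: ImageSize, factor: float) -> ImageSize:
    """Scale both dimensions, rounding to whole pixels."""
    # round() on a float returns an int, so the declared field types still hold.
    return ImageSize(round(size.width * factor), round(size.height * factor))


doubled = scale_size(ImageSize(512, 768), 2.0)  # ImageSize(width=1024, height=1536)

# Pylance (basic mode) flags this call as soon as you type it, because a str is
# not an ImageSize. Without the checker you would only find out when the line
# runs and raises AttributeError.
# broken = scale_size("512x768", 2.0)
```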

#### Debugging configs with `launch.json`

Debugging configs are managed in a `launch.json` file. Like most VSCode configs,

@@ -225,14 +208,6 @@ Now we can create the InvokeAI debugging configs:

      "program": "scripts/invokeai-cli.py",
      "justMyCode": true
    },
    {
      "type": "chrome",
      "request": "launch",
      "name": "InvokeAI UI",
      // You have to run the UI with `yarn dev` for this to work
      "url": "http://localhost:5173",
      "webRoot": "${workspaceFolder}/invokeai/frontend/web"
    },
    {
      // Run tests
      "name": "InvokeAI Test",

@@ -268,8 +243,7 @@ Now we can create the InvokeAI debugging configs:

You'll see these configs in the debugging configs drop-down. Running them will
start InvokeAI with the debugger attached, in the correct environment, and it will
work just like the normal app. The UI debugger, however, requires you to run the UI in
dev mode. See the [frontend guide](contribution_guides/contributingToFrontend.md) for setting that up.

Enjoy debugging InvokeAI with ease (not that we have any bugs of course).

@@ -38,9 +38,9 @@ There are two paths to making a development contribution:

If you need help, you can ask questions in the [#dev-chat](https://discord.com/channels/1020123559063990373/1049495067846524939) channel of the Discord.

For frontend-related work, **@psychedelicious** is the best person to reach out to.

For backend-related work, please reach out to **@blessedcoolant**, **@lstein**, **@StAlKeR7779** or **@psychedelicious**.

## **What does the Code of Conduct mean for me?**

@@ -10,4 +10,4 @@ When updating or creating documentation, please keep in mind InvokeAI is a tool

## Help & Questions

Please ping @imic or @hipsterusername in the [Discord](https://discord.com/channels/1020123559063990373/1049495067846524939) if you have any questions.

@@ -1,11 +1,13 @@

---
title: Control Adapters
---

# :material-loupe: Control Adapters

## ControlNet

ControlNet is a powerful set of features developed by the open-source
community (notably, Stanford researcher
[**@lllyasviel**](https://github.com/lllyasviel)) that allows you to

@@ -18,7 +20,7 @@ towards generating images that better fit your desired style or

outcome.

### How it works

ControlNet works by analyzing an input image, pre-processing that
image to identify relevant information that can be interpreted by each

@@ -28,7 +30,7 @@ composition, or other aspects of the image to better achieve a

specific result.

### Models

InvokeAI provides access to a series of ControlNet models that provide
different effects or styles in your generated images. Currently

@@ -94,8 +96,6 @@ A model that generates normal maps from input images, allowing for more realisti

**Image Segmentation**:
A model that divides input images into segments or regions, each of which corresponds to a different object or part of the image. (More details coming soon)

**QR Code Monster**:
A model that helps generate creative QR codes that still scan. It can also be used to create images with text, logos or shapes within them.

**Openpose**:
The OpenPose control model allows for the identification of the general pose of a character by pre-processing an existing image with a clear human structure. With advanced options, Openpose can also detect the face or hands in the image.

@@ -120,7 +120,7 @@ With Pix2Pix, you can input an image into the controlnet, and then "instruct" th

Each of these models can be adjusted and combined with other ControlNet models to achieve different results, giving you even more control over your image generation process.

### Using ControlNet

To use ControlNet, select the desired model and adjust both the ControlNet and Pre-processor settings to achieve the desired result. You can also use multiple ControlNet models at the same time to achieve even more complex effects or styles in your generated images.

@@ -132,31 +132,3 @@ Weight - Strength of the Controlnet model applied to the generation for the sect

Start/End - 0 represents the start of the generation, 1 represents the end. The Start/End setting controls which steps of the generation process have the ControlNet applied.
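
The following is a minimal sketch that converts the Start/End fractions into the denoising steps on which a control adapter would be active. The helper name and the rounding rule are assumptions for illustration: Start is rounded down, End is rounded up, and End is exclusive. InvokeAI's own conversion may round differently at the boundaries.

```python
import math


def control_step_range(begin: float, end: float, total_steps: int) -> range:
    """Map Start/End fractions (0 = start, 1 = end) to denoising step indices.

    Assumption: floor() for the start, ceil() for the end, half-open range.
    """
    if not 0.0 <= begin <= end <= 1.0:
        raise ValueError("expected 0 <= begin <= end <= 1")
    first = math.floor(begin * total_steps)
    last = math.ceil(end * total_steps)
    return range(first, last)


# 30 steps with Start=0 and End=0.5: the ControlNet guides the first 15 steps only.
print(control_step_range(0.0, 0.5, 30))  # range(0, 15)
```

Under these assumptions, Start=0 and End=1 cover every step, and narrowing the window simply drops steps from either end.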

Additionally, each ControlNet section can be expanded to adjust the settings of the image pre-processor, which transforms your uploaded image before it is used when you Invoke.

## IP-Adapter

[IP-Adapter](https://ip-adapter.github.io) is a tool that adds image prompt capabilities to text-to-image diffusion models. IP-Adapter works by analyzing the given image prompt to extract features, then passing those features to the UNet along with any other conditioning provided.
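
For intuition, the IP-Adapter paper describes this mechanism as *decoupled cross-attention*. The UNet's query features attend to the text embedding and to the image-prompt features through separate key/value projections, and the two results are added. The formula below uses the paper's notation. Treating the *Weight* setting described below as the scale $\lambda$ is our assumption; this page does not state it.

$$
\mathbf{Z}^{\text{new}} = \operatorname{Softmax}\!\left(\frac{\mathbf{Q}\mathbf{K}^{\top}}{\sqrt{d}}\right)\mathbf{V} + \lambda \cdot \operatorname{Softmax}\!\left(\frac{\mathbf{Q}(\mathbf{K}')^{\top}}{\sqrt{d}}\right)\mathbf{V}'
$$

The terms are defined as follows:

- $\mathbf{Q} = \mathbf{Z}\mathbf{W}_q$ is the query projection of the UNet features $\mathbf{Z}$.
- $\mathbf{K} = \mathbf{c}_t\mathbf{W}_k$ and $\mathbf{V} = \mathbf{c}_t\mathbf{W}_v$ come from the text features $\mathbf{c}_t$.
- $\mathbf{K}' = \mathbf{c}_i\mathbf{W}'_k$ and $\mathbf{V}' = \mathbf{c}_i\mathbf{W}'_v$ come from the image-prompt features $\mathbf{c}_i$.

Setting $\lambda = 0$ recovers the original text-only model.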

### Installation

There are several ways to install IP-Adapter models with an existing InvokeAI installation:

1. Through the command line interface launched from the invoke.sh / invoke.bat scripts, option [5] to download models.
2. Through the Model Manager UI, with models from the *Tools* section of [models.invoke.ai](https://models.invoke.ai). To do this, copy the repo ID from the desired model page, and paste it in the Add Model field of the model manager. **Note:** Both the IP-Adapter and the Image Encoder must be installed for IP-Adapter to work. For example, the [SD 1.5 IP-Adapter](https://models.invoke.ai/InvokeAI/ip_adapter_plus_sd15) and [SD 1.5 Image Encoder](https://models.invoke.ai/InvokeAI/ip_adapter_sd_image_encoder) must be installed to use IP-Adapter with SD 1.5-based models.
3. **Advanced (not recommended):** Manually download the IP-Adapter and Image Encoder files. Image Encoder folders should be placed in the `models/any/clip_vision` folder. IP-Adapter model folders should be placed in the `ip_adapter` folder of the relevant base model folder in the InvokeAI root directory. For example, for the SDXL IP-Adapter, files should be added to the `models/sdxl/ip_adapter/` folder.

### Using IP-Adapter

IP-Adapter can be used by navigating to the *Control Adapters* options and enabling IP-Adapter.

IP-Adapter requires an image to be used as the Image Prompt. It can also be used in conjunction with text prompts, Image-to-Image, Inpainting, Outpainting, ControlNets and LoRAs.

Each IP-Adapter has two settings:

* Weight - Strength of the IP-Adapter model applied to the generation for the section, defined by start/end
* Start/End - 0 represents the start of the generation, 1 represents the end. The Start/End setting controls which steps of the generation process have the IP-Adapter applied.

@@ -296,18 +296,8 @@ code for InvokeAI. For this to work, you will need to install the

on your system, please see the [Git Installation
Guide](https://github.com/git-guides/install-git)

You will also need to install the [frontend development toolchain](https://github.com/invoke-ai/InvokeAI/blob/main/docs/contributing/contribution_guides/contributingToFrontend.md).

If you have a "normal" installation, you should create a totally separate virtual environment for the git-based installation, else the two may interfere.

> **Why do I need the frontend toolchain**?
>
> The InvokeAI project uses trunk-based development. That means our `main` branch is the development branch, and releases are tags on that branch. Because development is very active, we don't keep an updated build of the UI in `main`; we only build it for production releases.
>
> That means that between releases, to have a functioning application when running directly from the repo, you will need to run the UI in dev mode or build it regularly (any time the UI code changes).

1. Create a fork of the InvokeAI repository through the GitHub UI or [this link](https://github.com/invoke-ai/InvokeAI/fork)
2. From the command line, run this command:
    ```bash
    git clone https://github.com/<your_github_username>/InvokeAI.git
    ```

@@ -315,10 +305,10 @@ If you have a "normal" installation, you should create a totally separate virtua

    This will create a directory named `InvokeAI` and populate it with the
    full source code from your fork of the InvokeAI repository.

3. Activate the InvokeAI virtual environment as per step (4) of the manual
    installation protocol (important!)

4. Enter the InvokeAI repository directory and run one of these
    commands, based on your GPU:

    === "CUDA (NVidia)"

@@ -344,15 +334,11 @@ installation protocol (important!)

    Be sure to pass `-e` (for an editable install) and don't forget the
    dot ("."). It is part of the command.

5. Install the [frontend toolchain](https://github.com/invoke-ai/InvokeAI/blob/main/docs/contributing/contribution_guides/contributingToFrontend.md) and do a production build of the UI as described.

6. You can now run `invokeai` and its related commands. The code will be
    read from the repository, so that you can edit the .py source files
    and watch the code's behavior change.

    When you pull in new changes to the repo, be sure to re-build the UI.

7. If you wish to contribute to the InvokeAI project, you are
    encouraged to establish a GitHub account and "fork"
    https://github.com/invoke-ai/InvokeAI into your own copy of the
    repository. You can then use GitHub functions to create and submit

@@ -171,16 +171,3 @@ subfolders and organize them as you wish.

The location of the autoimport directories is controlled by settings
in `invokeai.yaml`. See [Configuration](../features/CONFIGURATION.md).

### Installing models that live in HuggingFace subfolders

On rare occasions you may need to install a diffusers-style model that
lives in a subfolder of a HuggingFace repo id. In this event, simply
add ":_subfolder-name_" to the end of the repo id. For example, if the
repo id is "monster-labs/control_v1p_sd15_qrcode_monster" and the model
you wish to fetch lives in a subfolder named "v2", then the repo id to
pass to the various model installers should be

```
monster-labs/control_v1p_sd15_qrcode_monster:v2
```
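
If you are scripting installs, the `repo_id:subfolder` convention is easy to split apart. The helper below is a hypothetical sketch of that convention. It is not the parser that InvokeAI's model installer actually uses.

```python
from typing import NamedTuple, Optional


class RepoSpec(NamedTuple):
    repo_id: str
    subfolder: Optional[str]


def split_repo_id(spec: str) -> RepoSpec:
    """Split 'owner/name:subfolder' into the repo id and an optional subfolder."""
    # partition() splits on the first ':' only; HuggingFace repo ids contain none.
    repo_id, _, subfolder = spec.partition(":")
    # A missing or empty suffix means "no subfolder".
    return RepoSpec(repo_id, subfolder or None)


print(split_repo_id("monster-labs/control_v1p_sd15_qrcode_monster:v2"))
```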

@@ -4,12 +4,12 @@ The workflow editor is a blank canvas allowing for the use of individual functio

If you're not familiar with Diffusion, take a look at our [Diffusion Overview](../help/diffusion.md). Understanding how diffusion works will make it easier to use the Workflow Editor and build workflows to suit your needs.

## Features

### Linear View

The Workflow Editor allows you to create a UI for your workflow, to make it easier to iterate on your generations.

To add an input to the Linear UI, right click on the input label and select "Add to Linear View".

The Linear UI View will also be part of the saved workflow, allowing you to share workflows and enable others to use them, regardless of complexity.

@@ -25,10 +25,6 @@ Any node or input field can be renamed in the workflow editor. If the input fiel

* Backspace/Delete to delete a node
* Shift+Click to drag and select multiple nodes

### Node Caching

Nodes have a "Use Cache" option in their footer. When it is enabled, a node can reuse its previously cached output for the same inputs instead of running again, which speeds up workflow processing.
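
The idea behind node caching can be sketched as memoization keyed on a node's type and input values. The `NodeCache` class below is a toy illustration under that assumption, and it requires inputs to be JSON-serializable. It is not InvokeAI's cache implementation.

```python
import hashlib
import json
from typing import Any, Callable, Dict


class NodeCache:
    """Toy in-memory cache of node outputs, keyed by node type and inputs."""

    def __init__(self) -> None:
        self._outputs: Dict[str, Any] = {}
        self.hits = 0

    @staticmethod
    def key(node_type: str, inputs: Dict[str, Any]) -> str:
        # sort_keys=True makes the key independent of the order inputs were given in.
        payload = json.dumps({"type": node_type, "inputs": inputs}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def run(self, node_type: str, inputs: Dict[str, Any],
            compute: Callable[..., Any], use_cache: bool = True) -> Any:
        """Return the cached output when allowed; otherwise compute (and store) it."""
        if not use_cache:
            return compute(**inputs)
        k = self.key(node_type, inputs)
        if k in self._outputs:
            self.hits += 1
            return self._outputs[k]
        output = compute(**inputs)
        self._outputs[k] = output
        return output


def add(a: int, b: int) -> int:
    return a + b


cache = NodeCache()
first = cache.run("add", {"a": 1, "b": 2}, add)   # computed and stored
second = cache.run("add", {"b": 2, "a": 1}, add)  # same inputs: served from the cache
```

A cache like this is only correct when a node's output depends solely on its inputs. For a node that should produce a fresh result on every run, the sketch's `use_cache=False` path plays the role of switching the option off.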

|

||||

|

||||

|

||||

## Important Concepts

|

||||

|

||||

|

||||

@@ -8,7 +8,19 @@ To download a node, simply download the `.py` node file from the link and add it

|

||||

|

||||

To use a community workflow, download the the `.json` node graph file and load it into Invoke AI via the **Load Workflow** button in the Workflow Editor.

|

||||

|

||||

--------------------------------

|

||||

## Community Nodes

|

||||

|

||||

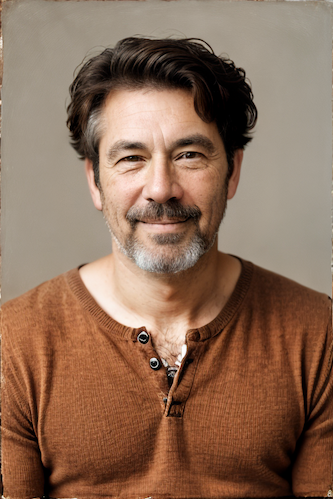

### FaceTools

|

||||

|

||||

**Description:** FaceTools is a collection of nodes created to manipulate faces as you would in Unified Canvas. It includes FaceMask, FaceOff, and FacePlace. FaceMask autodetects a face in the image using MediaPipe and creates a mask from it. FaceOff similarly detects a face, then takes the face off of the image by adding a square bounding box around it and cropping/scaling it. FacePlace puts the bounded face image from FaceOff back onto the original image. Using these nodes with other inpainting node(s), you can put new faces on existing things, put new things around existing faces, and work closer with a face as a bounded image. Additionally, you can supply X and Y offset values to scale/change the shape of the mask for finer control on FaceMask and FaceOff. See GitHub repository below for usage examples.

|

||||

|

||||

**Node Link:** https://github.com/ymgenesis/FaceTools/

|

||||

|

||||

**FaceMask Output Examples**

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

--------------------------------

|

||||

### Ideal Size

|

||||

@@ -31,52 +43,6 @@ To use a community workflow, download the the `.json` node graph file and load i

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/image-picker-node

|

||||

|

||||

--------------------------------

|

||||

### Thresholding

|

||||

|

||||

**Description:** This node generates masks for highlights, midtones, and shadows given an input image. You can optionally specify a blur for the lookup table used in making those masks from the source image.
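
The lookup-table approach mentioned in the description can be sketched in pure Python. Each of the three masks is a 256-entry table that maps luminance to mask strength. Blurring the tables softens the transitions between tonal ranges. The thresholds (85 and 170) and the box blur are assumptions for illustration, not the node's actual defaults.

```python
from typing import List, Tuple

Gray = List[List[int]]  # rows of 0-255 luminance values


def _box_blur(lut: List[float], radius: int) -> List[float]:
    """1-D box blur with edge clamping, used to soften the LUT transitions."""
    n = len(lut)
    out = []
    for i in range(n):
        window = [lut[min(max(j, 0), n - 1)] for j in range(i - radius, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


def tone_luts(shadow_max: int = 85, highlight_min: int = 170,
              blur_radius: int = 0) -> Tuple[List[float], List[float], List[float]]:
    """Build highlight, midtone and shadow lookup tables (values 0-255)."""
    if not 0 <= shadow_max < highlight_min <= 255:
        raise ValueError("expected 0 <= shadow_max < highlight_min <= 255")
    highlights = [255.0 if v >= highlight_min else 0.0 for v in range(256)]
    shadows = [255.0 if v <= shadow_max else 0.0 for v in range(256)]
    # Whatever is neither highlight nor shadow is midtone.
    midtones = [255.0 - h - s for h, s in zip(highlights, shadows)]
    if blur_radius > 0:
        highlights = _box_blur(highlights, blur_radius)
        midtones = _box_blur(midtones, blur_radius)
        shadows = _box_blur(shadows, blur_radius)
    return highlights, midtones, shadows


def tone_masks(gray: Gray, **lut_options) -> Tuple[Gray, Gray, Gray]:
    """Look up every pixel in each table to get the three masks."""
    highlights, midtones, shadows = tone_luts(**lut_options)

    def apply(lut: List[float]) -> Gray:
        return [[round(lut[v]) for v in row] for row in gray]

    return apply(highlights), apply(midtones), apply(shadows)
```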

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/thresholding-node

|

||||

|

||||

**Examples**

|

||||

|

||||

Input:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

Highlights/Midtones/Shadows:

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/727021c1-36ff-4ec8-90c8-105e00de986d" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0b721bfc-f051-404e-b905-2f16b824ddfe" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/04c1297f-1c88-42b6-a7df-dd090b976286" style="width: 30%" />

|

||||

|

||||

Highlights/Midtones/Shadows (with LUT blur enabled):

|

||||

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/19aa718a-70c1-4668-8169-d68f4bd13771" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0a440e43-697f-4d17-82ee-f287467df0a5" style="width: 30%" />

|

||||

<img src="https://github.com/invoke-ai/InvokeAI/assets/34005131/0701fd0f-2ca7-4fe2-8613-2b52547bafce" style="width: 30%" />

|

||||

|

||||

--------------------------------

|

||||

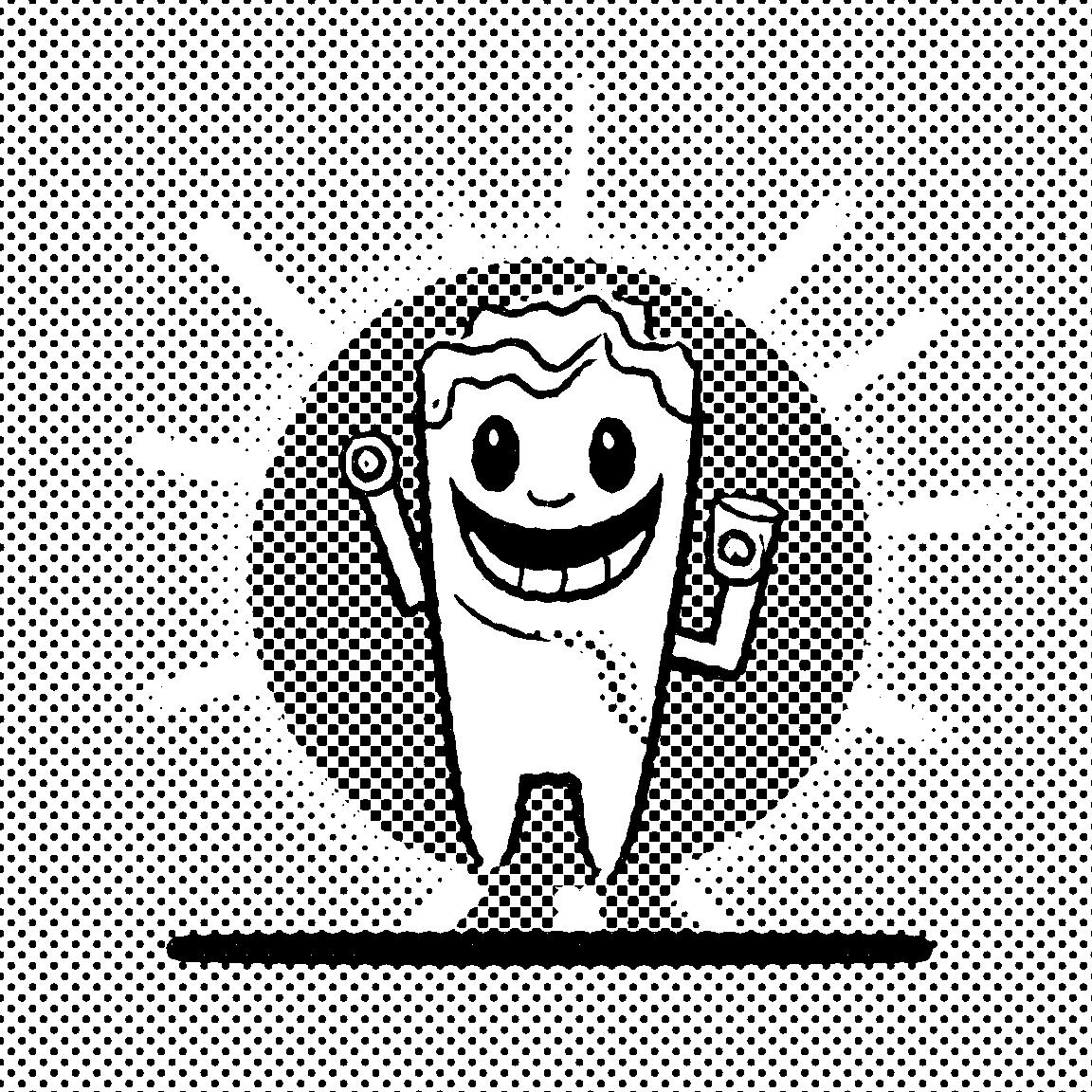

### Halftone

|

||||

|

||||

**Description**: Halftone converts the source image to grayscale and then performs halftoning. CMYK Halftone converts the image to CMYK and applies a per-channel halftoning to make the source image look like a magazine or newspaper. For both nodes, you can specify angles and halftone dot spacing.
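
The following simplified grayscale sketch illustrates what halftoning does. It draws one dot per `spacing` x `spacing` cell, with dot area proportional to the cell's darkness. It leaves out the screen angle and the per-channel CMYK variant that the node supports, and it is not the node's source.

```python
import math
from typing import List

Gray = List[List[int]]  # rows of 0-255 values, 0 = black


def halftone(gray: Gray, spacing: int = 4) -> Gray:
    """Render a grayscale image as black dots on white, one dot per cell."""
    height, width = len(gray), len(gray[0])
    out = [[255] * width for _ in range(height)]
    for top in range(0, height, spacing):
        for left in range(0, width, spacing):
            rows = range(top, min(top + spacing, height))
            cols = range(left, min(left + spacing, width))
            cell = [gray[y][x] for y in rows for x in cols]
            # Darkness 0.0 = white cell, 1.0 = black cell.
            darkness = 1.0 - sum(cell) / (255.0 * len(cell))
            # Dot area = darkness * cell area, so radius = sqrt(area / pi).
            radius = math.sqrt(darkness * spacing * spacing / math.pi)
            cy, cx = top + spacing / 2.0, left + spacing / 2.0
            for y in rows:
                for x in cols:
                    # Compare each pixel centre's distance to the cell centre.
                    if math.hypot(y + 0.5 - cy, x + 0.5 - cx) <= radius:
                        out[y][x] = 0
    return out
```

A real halftone screen also rotates the dot grid by the chosen angle. The CMYK version repeats the process per ink channel with a different angle for each.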

|

||||

|

||||

**Node Link:** https://github.com/JPPhoto/halftone-node

|

||||

|

||||

**Example**

|

||||

|

||||

Input:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

Halftone Output:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

CMYK Halftone Output:

|

||||

|

||||

{: style="height:512px;width:512px"}

|

||||

|

||||

--------------------------------

|

||||

### Retroize

|

||||

|

||||

@@ -111,7 +77,7 @@ Generated Prompt: An enchanted weapon will be usable by any character regardless

|

||||

**Example Node Graph:** https://github.com/helix4u/load_video_frame/blob/main/Example_Workflow.json

|

||||

|

||||

**Output Example:**

|

||||

|

||||

=======

|

||||

|

||||

[Full mp4 of Example Output test.mp4](https://github.com/helix4u/load_video_frame/blob/main/test.mp4)

|

||||

|

||||

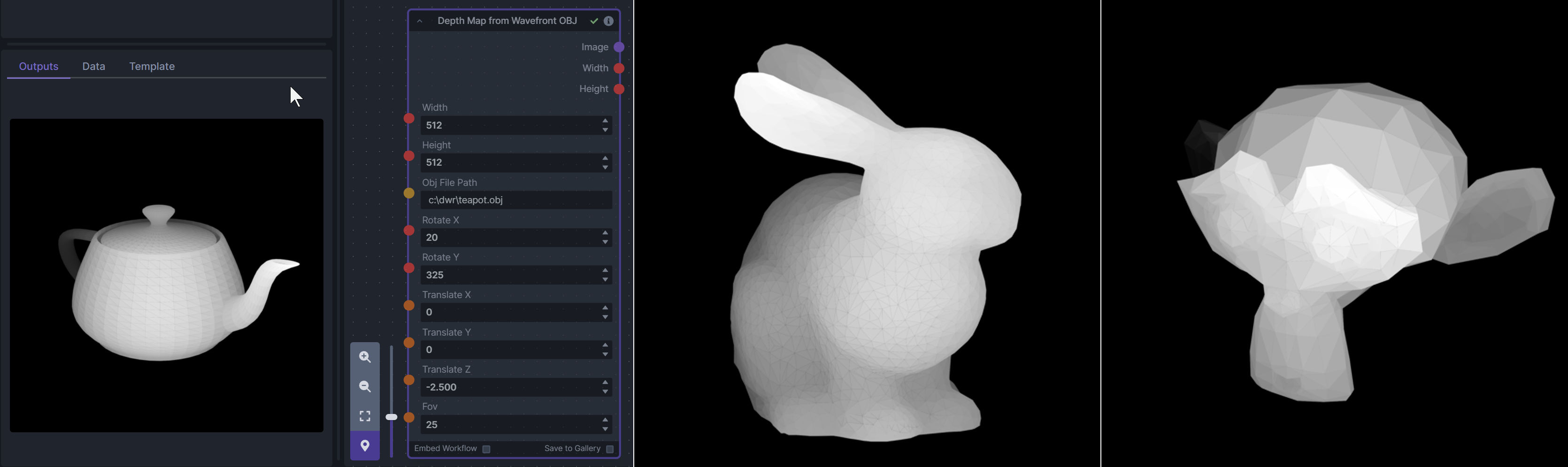

@@ -155,6 +121,18 @@ To be imported, an .obj must use triangulated meshes, so make sure to enable tha

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

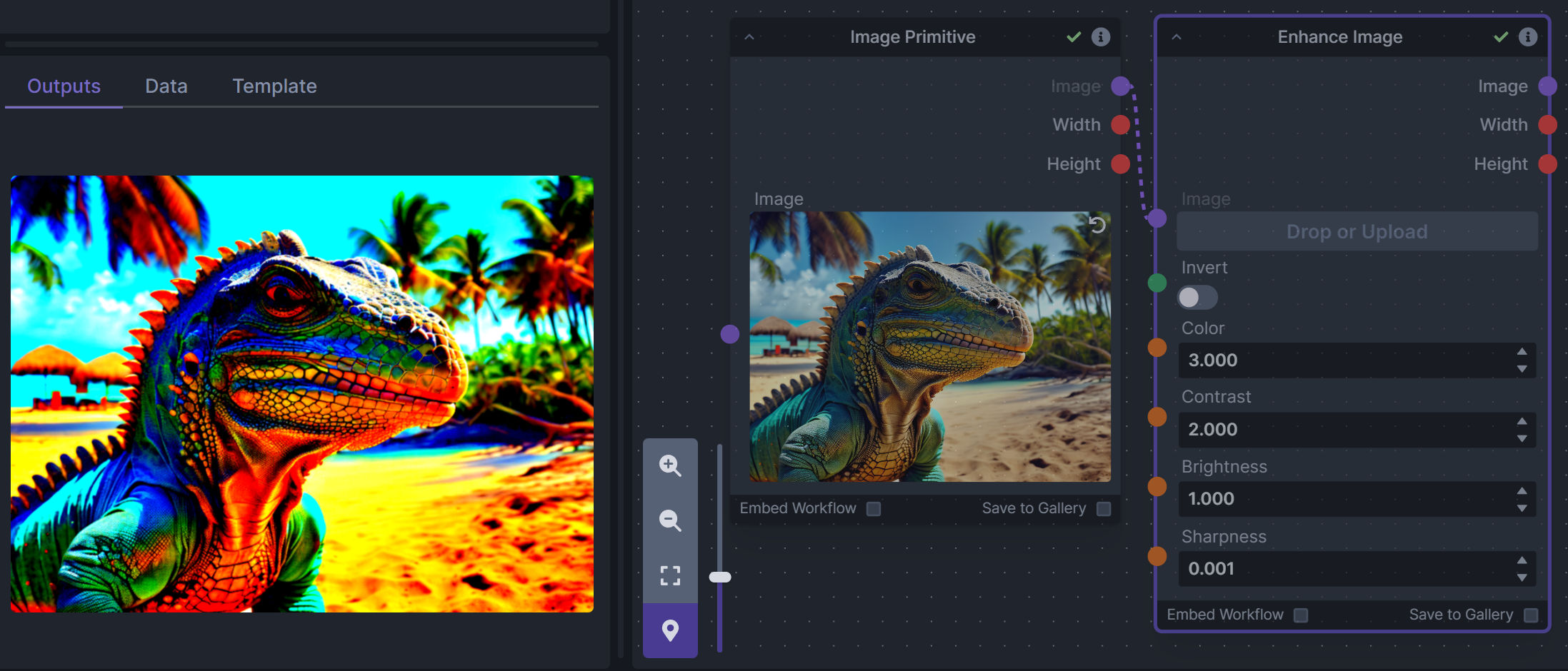

### Enhance Image (simple adjustments)

|

||||

|

||||

**Description:** Boost or reduce color saturation, contrast, brightness, sharpness, or invert colors of any image at any stage with this simple wrapper for pillow [PIL]'s ImageEnhance module.

|

||||

|

||||

Color inversion is toggled with a simple switch, while each of the four enhancer modes are activated by entering a value other than 1 in each corresponding input field. Values less than 1 will reduce the corresponding property, while values greater than 1 will enhance it.
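
A wrapper in the spirit of the description could look like the sketch below. It uses Pillow's real `ImageEnhance` and `ImageOps` APIs. The function name and the order in which the adjustments are applied are assumptions, and this is not the node's code.

```python
from PIL import Image, ImageEnhance, ImageOps


def enhance_image(image: Image.Image, color: float = 1.0, contrast: float = 1.0,
                  brightness: float = 1.0, sharpness: float = 1.0,
                  invert: bool = False) -> Image.Image:
    """Apply Pillow's ImageEnhance filters; a factor of 1.0 leaves that property alone."""
    image = image.convert("RGB")  # ImageOps.invert needs RGB or L mode
    if invert:
        image = ImageOps.invert(image)
    # An enhancer runs only when its factor differs from 1.0, matching the
    # "enter a value other than 1 to activate" behaviour described above.
    for enhancer, factor in ((ImageEnhance.Color, color),
                             (ImageEnhance.Contrast, contrast),
                             (ImageEnhance.Brightness, brightness),
                             (ImageEnhance.Sharpness, sharpness)):
        if factor != 1.0:
            image = enhancer(image).enhance(factor)
    return image
```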

|

||||

|

||||

**Node Link:** https://github.com/dwringer/image-enhance-node

|

||||

|

||||

**Example Usage:**

|

||||

|

||||

|

||||

--------------------------------

|

||||

### Generative Grammar-Based Prompt Nodes

|

||||

|

||||

@@ -175,28 +153,16 @@ This includes 3 Nodes:

|

||||

|

||||

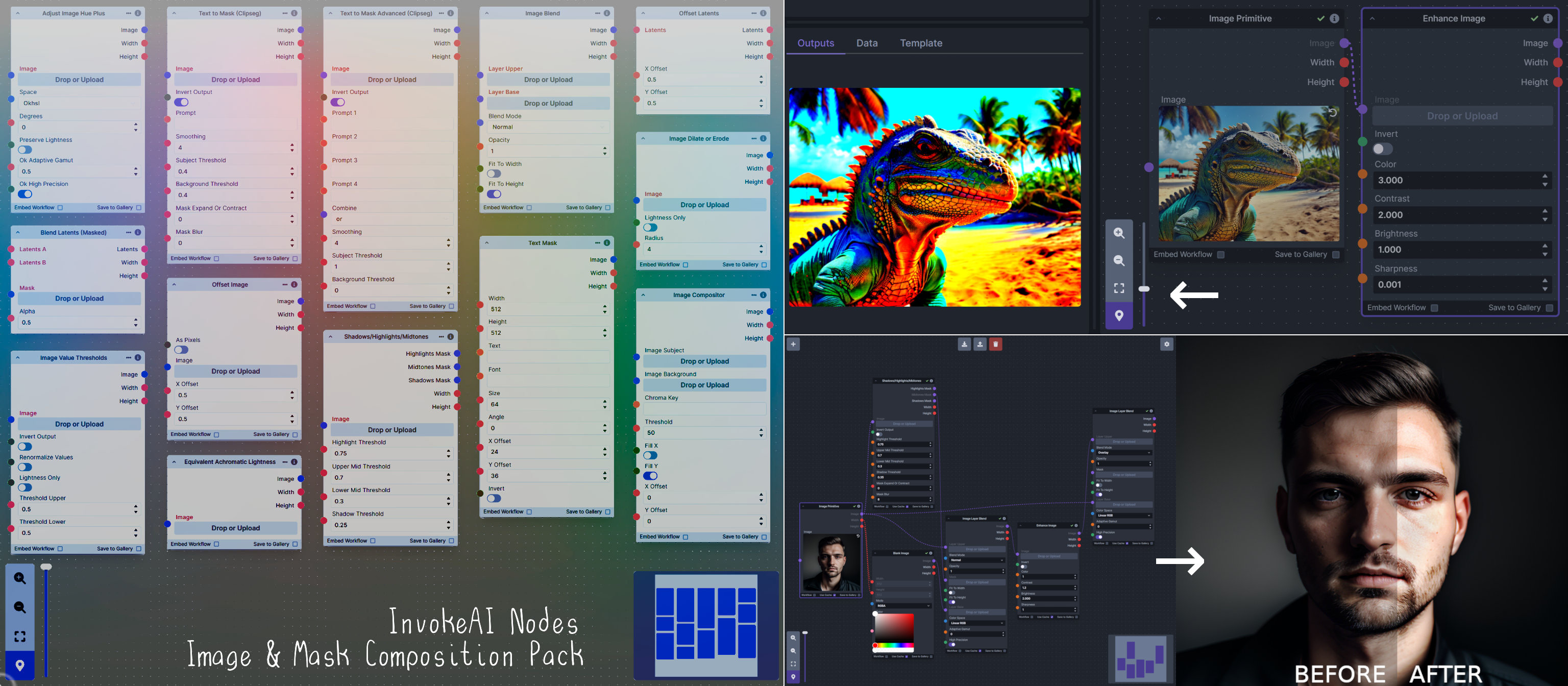

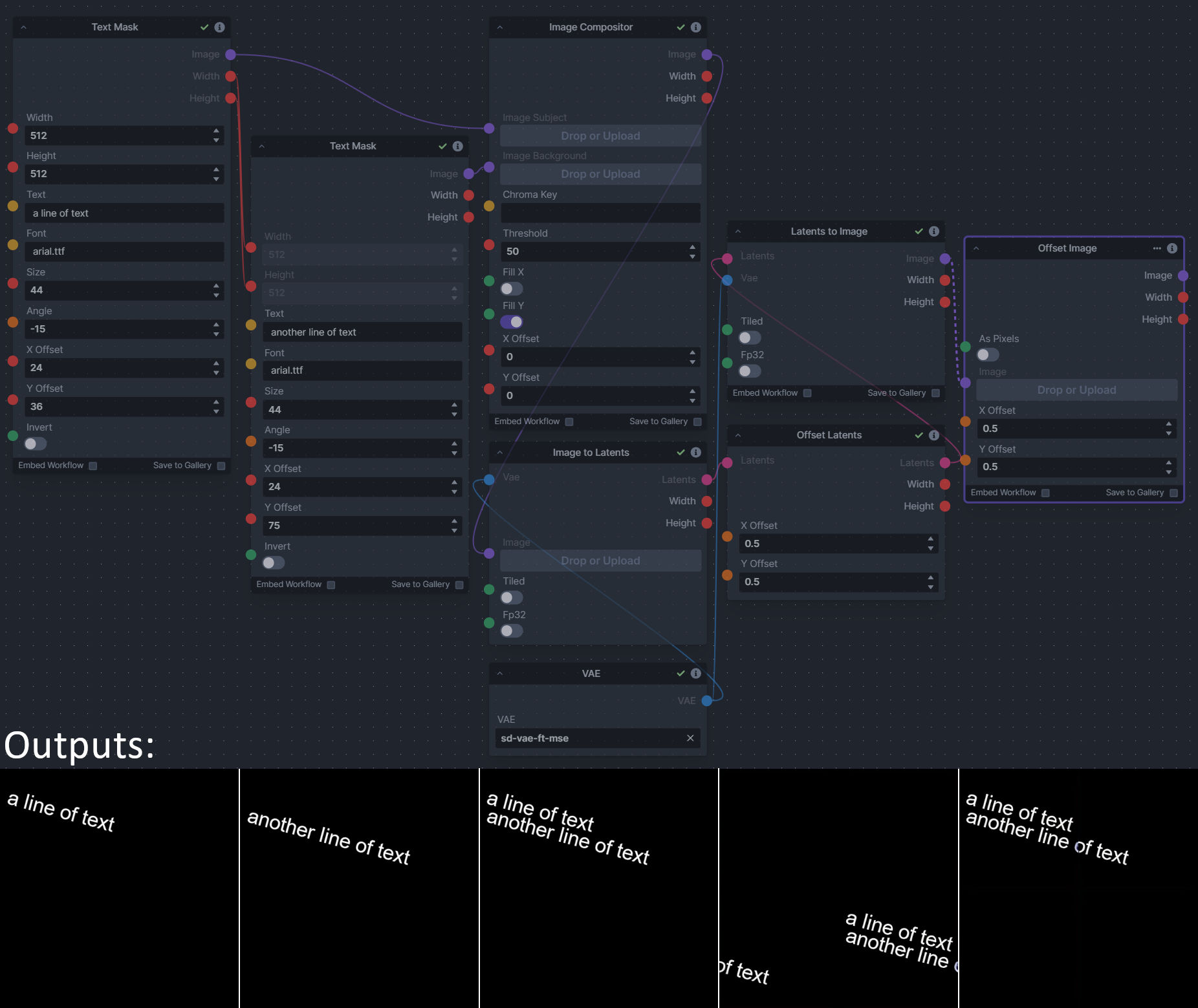

**Description:** This is a pack of nodes for composing masks and images, including a simple text mask creator and both image and latent offset nodes. The offsets wrap around, so these can be used in conjunction with the Seamless node to progressively generate centered on different parts of the seamless tiling.

|

||||

|

||||

This includes 15 Nodes:

|

||||

|

||||

- *Adjust Image Hue Plus* - Rotate the hue of an image in one of several different color spaces.

|

||||

- *Blend Latents/Noise (Masked)* - Use a mask to blend part of one latents tensor [including Noise outputs] into another. Can be used to "renoise" sections during a multi-stage [masked] denoising process.

|

||||

- *Enhance Image* - Boost or reduce color saturation, contrast, brightness, sharpness, or invert colors of any image at any stage with this simple wrapper for pillow [PIL]'s ImageEnhance module.

|

||||

- *Equivalent Achromatic Lightness* - Calculates image lightness accounting for Helmholtz-Kohlrausch effect based on a method described by High, Green, and Nussbaum (2023).

|

||||

- *Text to Mask (Clipseg)* - Input a prompt and an image to generate a mask representing areas of the image matched by the prompt.

|

||||

- *Text to Mask Advanced (Clipseg)* - Output up to four prompt masks combined with logical "and", logical "or", or as separate channels of an RGBA image.

|

||||

- *Image Layer Blend* - Perform a layered blend of two images using alpha compositing. Opacity of top layer is selectable, with optional mask and several different blend modes/color spaces.

|

||||

This includes 4 Nodes:

|

||||

- *Text Mask (simple 2D)* - create and position a white on black (or black on white) line of text using any font locally available to Invoke.

|

||||

- *Image Compositor* - Take a subject from an image with a flat backdrop and layer it on another image using a chroma key or flood select background removal.

|

||||

- *Image Dilate or Erode* - Dilate or expand a mask (or any image!). This is equivalent to an expand/contract operation.

|

||||

- *Image Value Thresholds* - Clip an image to pure black/white beyond specified thresholds.

|

||||

- *Offset Latents* - Offset a latents tensor in the vertical and/or horizontal dimensions, wrapping it around.

|

||||

- *Offset Image* - Offset an image in the vertical and/or horizontal dimensions, wrapping it around.

|

||||

- *Rotate/Flip Image* - Rotate an image in degrees clockwise/counterclockwise about its center, optionally resizing the image boundaries to fit, or flipping it about the vertical and/or horizontal axes.

|

||||

- *Shadows/Highlights/Midtones* - Extract three masks (with adjustable hard or soft thresholds) representing shadows, midtones, and highlights regions of an image.

|

||||

- *Text Mask (simple 2D)* - create and position a white on black (or black on white) line of text using any font locally available to Invoke.
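
The wrap-around behaviour of *Offset Image* and *Offset Latents* is what keeps a seamless tile seamless. Here is a minimal sketch on a plain 2-D list, using a hypothetical helper. For NumPy arrays, `numpy.roll(arr, (dy, dx), axis=(0, 1))` performs the same shift.

```python
from typing import List, TypeVar

T = TypeVar("T")


def offset_wrap(grid: List[List[T]], dx: int, dy: int) -> List[List[T]]:
    """Shift a 2-D grid right by dx and down by dy, wrapping at the edges.

    Cells pushed off one side re-enter on the opposite side, so the
    result still tiles seamlessly if the input did.
    """
    height, width = len(grid), len(grid[0])
    return [[grid[(y - dy) % height][(x - dx) % width] for x in range(width)]
            for y in range(height)]


print(offset_wrap([[1, 2, 3], [4, 5, 6]], dx=1, dy=0))  # [[3, 1, 2], [6, 4, 5]]
```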

**Node Link:** https://github.com/dwringer/composition-nodes

**Nodes and Output Examples:**

**Example Usage:**

--------------------------------

### Size Stepper Nodes

@@ -264,36 +230,6 @@ See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/READ

--------------------------------

### Image to Character Art Image Nodes

**Description:** Group of nodes to convert an input image into an ASCII/Unicode art image
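
The core of such a conversion is mapping each pixel's brightness onto a ramp of characters, from dense to sparse. The sketch below produces plain text from a grayscale grid. The node itself renders the characters back into an image, and the character ramp used here is an arbitrary assumption.

```python
from typing import List

# Characters ordered from visually densest to lightest (an assumption; any ramp works).
RAMP = "@%#*+=-:. "


def to_ascii(gray: List[List[int]], ramp: str = RAMP) -> str:
    """Map each 0-255 grayscale pixel to a character: dark pixels get dense glyphs."""
    last = len(ramp) - 1
    lines = ["".join(ramp[v * last // 255] for v in row) for row in gray]
    return "\n".join(lines)


print(to_ascii([[0, 64, 128, 192, 255]]))
```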

**Node Link:** https://github.com/mickr777/imagetoasciiimage

**Output Examples**

<img src="https://github.com/invoke-ai/InvokeAI/assets/115216705/8e061fcc-9a2c-4fa9-bcc7-c0f7b01e9056" width="300" />
<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/3c4990eb-2f42-46b9-90f9-0088b939dc6a" width="300" /><br>
<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/fee7f800-a4a8-41e2-a66b-c66e4343307e" width="300" />
<img src="https://github.com/mickr777/imagetoasciiimage/assets/115216705/1d9c1003-a45f-45c2-aac7-46470bb89330" width="300" />

--------------------------------

### Grid to Gif

**Description:** One node turns a grid image into an image collection, and another turns an image collection into a GIF.
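
Both halves of that description map onto a few Pillow calls: first crop equal tiles out of the grid, then save the tiles as an animated GIF. The helper names, the row-major tile order and the 200 ms frame duration are assumptions for illustration, not the node's implementation.

```python
import io
from typing import List

from PIL import Image


def grid_to_frames(grid: Image.Image, rows: int, cols: int) -> List[Image.Image]:
    """Cut a grid image into rows * cols equal tiles, left-to-right, top-to-bottom."""
    tile_w, tile_h = grid.width // cols, grid.height // rows
    return [grid.crop((c * tile_w, r * tile_h, (c + 1) * tile_w, (r + 1) * tile_h))
            for r in range(rows) for c in range(cols)]


def frames_to_gif(frames: List[Image.Image], fp, duration_ms: int = 200) -> None:
    """Write the frames as a looping animated GIF to a path or binary file object."""
    first, *rest = frames
    first.save(fp, format="GIF", save_all=True, append_images=rest,
               duration=duration_ms, loop=0)


# Usage: build a 2-tile grid in memory and turn it into a 2-frame GIF.
demo = Image.new("RGB", (4, 2), "red")
demo.paste((0, 0, 255), (2, 0, 4, 2))  # right half blue
buffer = io.BytesIO()
frames_to_gif(grid_to_frames(demo, rows=1, cols=2), buffer)
```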

**Node Link:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/GridToGif.py

**Example Node Graph:** https://github.com/mildmisery/invokeai-GridToGifNode/blob/main/Grid%20to%20Gif%20Example%20Workflow.json

**Output Examples**

<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/input.png" width="300" />
<img src="https://raw.githubusercontent.com/mildmisery/invokeai-GridToGifNode/main/output.gif" width="300" />

--------------------------------

### Example Node Template

**Description:** This node allows you to do super cool things with InvokeAI.

@@ -1,6 +1,6 @@

# List of Default Nodes

The table below contains a list of the default nodes shipped with InvokeAI and their descriptions.

| Node <img width=160 align="right"> | Function |
| :---------------------------------- | :--------------------------------------------------------------------------------------|

@@ -17,12 +17,11 @@ The table below contains a list of the default nodes shipped with InvokeAI and t

|Conditioning Primitive | A conditioning tensor primitive value|
|Content Shuffle Processor | Applies content shuffle processing to image|
|ControlNet | Collects ControlNet info to pass to other nodes|
|Denoise Latents | Denoises noisy latents to decodable images|
|Divide Integers | Divides two numbers|
|Dynamic Prompt | Parses a prompt using adieyal/dynamicprompts' random or combinatorial generator|
|[FaceMask](./detailedNodes/faceTools.md#facemask) | Generates masks for faces in an image to use with Inpainting|
|[FaceIdentifier](./detailedNodes/faceTools.md#faceidentifier) | Identifies and labels faces in an image|
|[FaceOff](./detailedNodes/faceTools.md#faceoff) | Creates a new image that is a scaled bounding box with a mask on the face for Inpainting|
|Float Math | Perform basic math operations on two floats|
|Float Primitive Collection | A collection of float primitive values|
|Float Primitive | A float primitive value|

@@ -77,7 +76,6 @@ The table below contains a list of the default nodes shipped with InvokeAI and t

|ONNX Prompt (Raw) | A node to process inputs and produce outputs. May use dependency injection in `__init__` to receive providers.|
|ONNX Text to Latents | Generates latents from conditionings.|
|ONNX Model Loader | Loads a main model, outputting its submodels.|
|OpenCV Inpaint | Simple inpaint using opencv.|
|Openpose Processor | Applies Openpose processing to image|
|PIDI Processor | Applies PIDI processing to image|
|Prompts from File | Loads prompts from a text file|

@@ -99,6 +97,5 @@ The table below contains a list of the default nodes shipped with InvokeAI and t

|String Primitive | A string primitive value|
|Subtract Integers | Subtracts two numbers|
|Tile Resample Processor | Tile resampler processor|
|Upscale (RealESRGAN) | Upscales an image using RealESRGAN.|
|VAE Loader | Loads a VAE model, outputting a VaeLoaderOutput|
|Zoe (Depth) Processor | Applies Zoe depth processing to image|

@@ -1,154 +0,0 @@

# Face Nodes

## FaceOff

FaceOff mimics a user finding a face in an image and resizing the bounding box
around the head in Canvas.

Enter a face ID (found with FaceIdentifier) to choose which face to mask.

Just as you would add more context inside the bounding box by making it larger
in Canvas, the node gives you a padding input (in pixels) which will
simultaneously add more context and increase the resolution of the bounding box
so the face remains the same size inside it.

The "Minimum Confidence" input defaults to 0.5 (50%), and represents a pass/fail
threshold a detected face must reach for it to be processed. Lowering this value
may help if detection is failing. If the detected masks are imperfect and stray
too far outside/inside of faces, the node gives you X & Y offsets to shrink/grow
the masks by a multiplier.

FaceOff will output the face in a bounded image, taking the face off of the
original image for input into any node that accepts image inputs. The node also
outputs a face mask with the dimensions of the bounded image. The X & Y outputs
are for connecting to the X & Y inputs of the Paste Image node, which will place
the bounded image back on the original image using these coordinates.
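
The padding and the X & Y outputs fit together as simple box arithmetic. The sketch below grows a detected face box by `padding` pixels per side and clamps it to the image. The `Box` type and `pad_box` helper are hypothetical, and clamping at the image edges is our assumption about how out-of-bounds padding is handled. The returned `x` and `y` are the coordinates you would feed to Paste Image to put the crop back in place.

```python
from typing import NamedTuple


class Box(NamedTuple):
    x: int       # left edge, like FaceOff's X output
    y: int       # top edge, like FaceOff's Y output
    width: int
    height: int


def pad_box(face: Box, padding: int, image_w: int, image_h: int) -> Box:
    """Grow a face bounding box by `padding` pixels per side, clamped to the image."""
    left = max(face.x - padding, 0)
    top = max(face.y - padding, 0)
    right = min(face.x + face.width + padding, image_w)
    bottom = min(face.y + face.height + padding, image_h)
    return Box(left, top, right - left, bottom - top)


print(pad_box(Box(100, 80, 64, 64), padding=32, image_w=512, image_h=512))
```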


###### Inputs/Outputs

| Input              | Description |
| ------------------ | ----------- |
| Image              | Image for face detection |
| Face ID            | The face ID to process, numbered from 0. Multiple faces are not supported. Find a face's ID with the FaceIdentifier node. |
| Minimum Confidence | Minimum confidence for face detection (lower it if detection is failing) |
| X Offset           | X-axis offset of the mask |
| Y Offset           | Y-axis offset of the mask |
| Padding            | Padding around the mask on all sides, in pixels |
| Chunk              | Chunk (divide) the image into sections to greatly improve face detection success. Off by default, but activates automatically if no faces are detected normally. Enable it to always chunk. |

| Output        | Description |
| ------------- | ----------- |
| Bounded Image | Original image bound, cropped, and resized |
| Width         | The width of the bounded image in pixels |
| Height        | The height of the bounded image in pixels |
| Mask          | The output mask |
| X             | The x coordinate of the bounding box's left side |
| Y             | The y coordinate of the bounding box's top side |
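
The Padding input feeds into the size of the square crop. The sketch below restates the crop-size arithmetic from `extract_face()` in the face-tools source shown later in this diff. `bounded_crop_size` is a hypothetical name. It uses the image size where the original uses the mask size, and it omits the later step that shifts the box back inside the image edges.

```python
def bounded_crop_size(
    mesh_width: int, mesh_height: int, padding: int, image_width: int, image_height: int
) -> int:
    """Side length (px) of FaceOff's square crop, following extract_face()'s arithmetic."""
    # Grow the face mesh box by a fixed 128 px margin plus the user's Padding.
    padded_w = mesh_width + 128 + padding
    padded_h = mesh_height + 128 + padding
    # Use the larger side (at least 128 px), capped at the image's shorter side.
    crop_size = min(max(padded_w, padded_h, 128), min(image_width, image_height))
    # Above 128 px, round up to the next multiple of 8.
    if crop_size > 128:
        crop_size = (crop_size + 7) // 8 * 8
    return crop_size


print(bounded_crop_size(100, 120, 0, 512, 768))   # 248
print(bounded_crop_size(100, 120, 10, 512, 768))  # 264
```

Adding 10 px of padding pushes the 248 px crop to 258 px, which is then rounded up to 264 px.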


## FaceMask

FaceMask mimics a user drawing masks over faces in an image in the Canvas.

The "Face IDs" input selects which faces are masked. Leave it empty to detect
and mask all faces, or enter a comma-separated list for a specific combination
of faces (e.g. `1,2,4`). A single integer detects and masks only that face.
Find face IDs with the FaceIdentifier node.
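
The sketch below shows how a Face IDs string could map to face indices. It assumes comma separation with optional spaces, and treats an empty string as "all faces". `parse_face_ids` is hypothetical; the node's own parsing and validation may differ.

```python
def parse_face_ids(face_ids: str) -> list[int]:
    """Parse a Face IDs string such as "1,2,4" into a list of integers.

    An empty (or whitespace-only) string returns [], standing for "mask all faces".
    """
    stripped = face_ids.strip()
    if not stripped:
        return []
    ids = []
    for part in stripped.split(","):
        part = part.strip()
        # Face IDs are numbered from 0, so only non-negative integers are valid.
        if not part.isdigit():
            raise ValueError(f"Face IDs must be non-negative integers, got {part!r}")
        ids.append(int(part))
    return ids


print(parse_face_ids("1,2,4"))  # [1, 2, 4]
print(parse_face_ids(""))       # []
```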


The "Minimum Confidence" input defaults to 0.5 (50%) and sets the pass/fail
threshold a detected face must reach to be processed. Lowering this value
may help if detection is failing.

If the detected masks are imperfect and stray too far outside or inside the faces,
the node provides X & Y offsets that shrink or grow the masks by a multiplier. All
masks shrink or grow together by the same X & Y offset values.
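
In the face-tools source included in this diff, each offset moves every face landmark relative to the landmarks' centroid. A positive offset pushes points away and a negative offset pulls them in, by `0.2 * offset` of their distance from the centroid. The mask is then filled from the points' convex hull. Below is a standalone sketch of that scaling step, with the hull and fill omitted; `scale_landmarks` is a hypothetical name.

```python
import numpy as np

# Multiplier hard-coded in generate_face_box_mask() in the face-tools source.
SCALE_MULTIPLIER = 0.2


def scale_landmarks(points: np.ndarray, x_offset: float, y_offset: float) -> np.ndarray:
    """Push (N, 2) landmark points away from their centroid for positive offsets,
    or pull them toward it for negative offsets."""
    x_center = points[:, 0].mean()
    y_center = points[:, 1].mean()
    x_scaled = points[:, 0] + SCALE_MULTIPLIER * x_offset * (points[:, 0] - x_center)
    y_scaled = points[:, 1] + SCALE_MULTIPLIER * y_offset * (points[:, 1] - y_center)
    return np.column_stack((x_scaled, y_scaled))


square = np.array([[0.0, 0.0], [10.0, 0.0], [10.0, 10.0], [0.0, 10.0]])
wider = scale_landmarks(square, x_offset=1.0, y_offset=0.0)
```

With an X offset of 1, the 10 px wide square becomes 12 px wide (20% larger), and its height is unchanged.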


By default, masks target the faces, so the faces are what gets changed. Inverting
the mask targets the surrounding areas instead, protecting the faces.
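
Inverting a grayscale mask swaps its black and white regions. Below is a minimal Pillow sketch. It assumes a single-channel mask like those the face-tools code builds: white background, black face region. `invert_face_mask` is a hypothetical helper, and which shade marks the area to change depends on the node consuming the mask.

```python
from PIL import Image, ImageOps


def invert_face_mask(mask: Image.Image) -> Image.Image:
    """Swap the black and white regions of a single-channel mask."""
    return ImageOps.invert(mask.convert("L"))


# A 4x4 mask shaped like the face-tools masks: white background, black 2x2 "face".
mask = Image.new("L", (4, 4), 255)
mask.paste(0, (0, 0, 2, 2))
inverted = invert_face_mask(mask)
```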


###### Inputs/Outputs

| Input              | Description |
| ------------------ | ----------- |
| Image              | Image for face detection |
| Face IDs           | Comma-separated list of face IDs to mask, e.g. `0,2,7`. Numbered from 0. Leave empty to mask all. Find face IDs with the FaceIdentifier node. |
| Minimum Confidence | Minimum confidence for face detection (lower it if detection is failing) |
| X Offset           | X-axis offset of the mask |
| Y Offset           | Y-axis offset of the mask |
| Chunk              | Chunk (divide) the image into sections to greatly improve face detection success. Off by default, but activates automatically if no faces are detected normally. Enable it to always chunk. |
| Invert Mask        | Toggle to invert the face mask |

| Output | Description |
| ------ | ----------- |
| Image  | The original image |
| Width  | The width of the image in pixels |
| Height | The height of the image in pixels |
| Mask   | The output face mask |


## FaceIdentifier

FaceIdentifier outputs an image with each detected face's ID printed onto it in
white numbers.

Face IDs can then be used in FaceMask and FaceOff to selectively mask all faces, a
specific combination of faces, or a single face.

The FaceIdentifier output image is generated for user reference and isn't meant
to be passed on to other image-processing nodes.

The "Minimum Confidence" input defaults to 0.5 (50%) and sets the pass/fail
threshold a detected face must reach to be processed. Lowering this value
may help if detection is failing. If an image changes even slightly, run
it through FaceIdentifier again to get updated face IDs.
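
Face IDs come from the order in which faces are sorted, which helps explain why an edited image needs a fresh pass through FaceIdentifier. In the face-tools source in this diff, deduplicated faces are sorted by center y and then, stably, by center x. As a result, IDs run left to right, with ties broken top to bottom. Below is a simplified sketch, with deduplication and MediaPipe detection omitted; `DetectedFace` and `assign_face_ids` are hypothetical names.

```python
from typing import TypedDict


class DetectedFace(TypedDict):
    x_center: float
    y_center: float


def assign_face_ids(faces: list[DetectedFace]) -> list[tuple[int, DetectedFace]]:
    """Number faces left to right, breaking ties in x from top to bottom."""
    # Two stable sorts (by y, then by x) give the same order as one sort on (x, y).
    ordered = sorted(faces, key=lambda f: f["y_center"])
    ordered = sorted(ordered, key=lambda f: f["x_center"])
    return list(enumerate(ordered))


faces: list[DetectedFace] = [
    {"x_center": 300.0, "y_center": 50.0},
    {"x_center": 100.0, "y_center": 200.0},
    {"x_center": 100.0, "y_center": 80.0},
]
for face_id, face in assign_face_ids(faces):
    print(face_id, face)
```

Because the order depends only on face centers, any edit that shifts a center can renumber the faces. So can any edit that changes which faces MediaPipe detects.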


###### Inputs/Outputs

| Input              | Description |
| ------------------ | ----------- |
| Image              | Image for face detection |
| Minimum Confidence | Minimum confidence for face detection (lower it if detection is failing) |
| Chunk              | Chunk (divide) the image into sections to greatly improve face detection success. Off by default, but activates automatically if no faces are detected normally. Enable it to always chunk. |

| Output | Description |
| ------ | ----------- |
| Image  | The original image with small face ID numbers printed in white onto each face for user reference |
| Width  | The width of the original image in pixels |
| Height | The height of the original image in pixels |


## Tips

- If not all target faces are being detected, activate Chunk to bypass
  full-image face detection and greatly improve detection success.
- For faces that both methods can detect, final results will differ between
  full-image detection and chunking due to the nature of the process. Use
  whichever you prefer.
- Make sure Minimum Confidence is set to the same value in FaceIdentifier and in
  FaceOff/FaceMask.
- For FaceOff, use the color correction node before pasting the face back so its
  edges are not noticeable in the final image (see example screenshot).
- Non-inpainting models may struggle to paint/generate correctly around faces.
- If a face won't change the way you want no matter what you adjust, the change
  you're trying to make may be too large for that resolution. For example, if an
  image is only 512x768 in total, the face might only be 128x128 or 256x256, much
  smaller than the 512x512 your SD1.5 model was probably trained on. Try
  increasing the resolution of the image by upscaling or resizing, add padding to
  increase the bounding box's resolution, or use an image where the face takes up
  more pixels.
- If the resulting face seems out of place when pasted back on the original image
  (i.e. too large or out of proportion), add more padding on the FaceOff node to
  give inpainting more context. Context and good prompting are important for
  keeping things proportional.
- If the mask is too big or too small and extends too far outside or inside the
  area you want to affect, adjust the X & Y offsets to shrink or grow the mask
  area.
- Use a higher denoise start value to keep more of the original face or
  surroundings. Denoise start = 0 & denoise end = 1 will make something new,
  while denoise start = 0.50 & denoise end = 1 will be 50% old and 50% new.
- MediaPipe isn't good at detecting faces with lots of face paint, hair covering
  the face, etc. Anything that obstructs the face will likely result in no faces
  being detected.
- If a face isn't being detected, try lowering the minimum confidence value from
  0.5. However, this can result in false positives (random areas being detected
  as faces and masked).
- After altering an image, if you want to process a different face in the altered
  image, run the altered image through FaceIdentifier again to see the new face
  IDs. MediaPipe will most likely detect faces in a different order after an image
  has changed even slightly.
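
The chunking approach from the first tip works in three steps: split the image into tiles, run detection on each tile, and shift each detected face center by its tile's top-left offset. The face-tools code accepts `chunk_x_offset` and `chunk_y_offset` for this shift. The non-overlapping grid and the `chunk_size` of 512 below are assumptions for illustration; the node's actual chunking strategy is not shown here. `chunk_boxes` and `to_full_image_coords` are hypothetical helpers.

```python
from PIL import Image


def chunk_boxes(width: int, height: int, chunk_size: int) -> list[tuple[int, int, int, int]]:
    """Split a width x height image into a grid of (left, top, right, bottom) boxes.

    Edge boxes are clipped to the image, so they may be smaller than chunk_size.
    """
    boxes = []
    for top in range(0, height, chunk_size):
        for left in range(0, width, chunk_size):
            boxes.append((left, top, min(left + chunk_size, width), min(top + chunk_size, height)))
    return boxes


def to_full_image_coords(center: tuple[float, float], box: tuple[int, int, int, int]) -> tuple[float, float]:
    """Shift a face center found inside a chunk back into full-image coordinates."""
    left, top, _, _ = box
    return (center[0] + left, center[1] + top)


image = Image.new("RGB", (1000, 600))
chunks = [(box, image.crop(box)) for box in chunk_boxes(*image.size, chunk_size=512)]
# Each cropped chunk would be passed to the face detector. A face centered at
# (10, 20) in the last chunk lies at (522, 532) in the full image.
print(to_full_image_coords((10, 20), chunks[-1][0]))
```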


@@ -9,6 +9,5 @@ If you're interested in finding more workflows, checkout the [#share-your-workfl
* [SD1.5 / SD2 Text to Image](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/Text_to_Image.json)
* [SDXL Text to Image](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/SDXL_Text_to_Image.json)
* [SDXL (with Refiner) Text to Image](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/SDXL_Text_to_Image.json)
* [Tiled Upscaling with ControlNet](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/ESRGAN_img2img_upscale w_Canny_ControlNet.json)
* [FaceMask](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/FaceMask.json)
* [FaceOff with 2x Face Scaling](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/FaceOff_FaceScale2x.json)
* [Tiled Upscaling with ControlNet](https://github.com/invoke-ai/InvokeAI/blob/main/docs/workflows/ESRGAN_img2img_upscale w_Canny_ControlNet.json)ß



@@ -332,7 +332,6 @@ class InvokeAiInstance:
Configure the InvokeAI runtime directory
"""

auto_install = False
# set sys.argv to a consistent state
new_argv = [sys.argv[0]]
for i in range(1, len(sys.argv)):
@@ -341,17 +340,13 @@ class InvokeAiInstance:
new_argv.append(el)
new_argv.append(sys.argv[i + 1])
elif el in ["-y", "--yes", "--yes-to-all"]:
auto_install = True
new_argv.append(el)
sys.argv = new_argv

import messages
import requests # to catch download exceptions
from messages import introduction

auto_install = auto_install or messages.user_wants_auto_configuration()
if auto_install:
sys.argv.append("--yes")
else:
messages.introduction()
introduction()

from invokeai.frontend.install.invokeai_configure import invokeai_configure


@@ -7,7 +7,7 @@ import os
import platform
from pathlib import Path

from prompt_toolkit import HTML, prompt
from prompt_toolkit import prompt
from prompt_toolkit.completion import PathCompleter
from prompt_toolkit.validation import Validator
from rich import box, print
@@ -65,50 +65,17 @@ def confirm_install(dest: Path) -> bool:
if dest.exists():
print(f":exclamation: Directory {dest} already exists :exclamation:")
dest_confirmed = Confirm.ask(
":stop_sign: (re)install in this location?",
":stop_sign: Are you sure you want to (re)install in this location?",
default=False,
)
else:
print(f"InvokeAI will be installed in {dest}")
dest_confirmed = Confirm.ask("Use this location?", default=True)
dest_confirmed = not Confirm.ask("Would you like to pick a different location?", default=False)
console.line()

return dest_confirmed


def user_wants_auto_configuration() -> bool:
"""Prompt the user to choose between manual and auto configuration."""
console.rule("InvokeAI Configuration Section")
console.print(
Panel(
Group(
"\n".join(
[
"Libraries are installed and InvokeAI will now set up its root directory and configuration. Choose between:",
"",
" * AUTOMATIC configuration: install reasonable defaults and a minimal set of starter models.",
" * MANUAL configuration: manually inspect and adjust configuration options and pick from a larger set of starter models.",
"",
"Later you can fine tune your configuration by selecting option [6] 'Change InvokeAI startup options' from the invoke.bat/invoke.sh launcher script.",
]
),
),
box=box.MINIMAL,
padding=(1, 1),
)
)
choice = (
prompt(
HTML("Choose <b><a></b>utomatic or <b><m></b>anual configuration [a/m] (a): "),
validator=Validator.from_callable(
lambda n: n == "" or n.startswith(("a", "A", "m", "M")), error_message="Please select 'a' or 'm'"
),
)
or "a"
)
return choice.lower().startswith("a")


def dest_path(dest=None) -> Path:
"""
Prompt the user for the destination path and create the path


@@ -49,7 +49,7 @@ def check_internet() -> bool:
return False


logger = InvokeAILogger.get_logger()
logger = InvokeAILogger.getLogger()


class ApiDependencies:


@@ -146,8 +146,7 @@ async def update_model(
async def import_model(
location: str = Body(description="A model path, repo_id or URL to import"),
prediction_type: Optional[Literal["v_prediction", "epsilon", "sample"]] = Body(
description="Prediction type for SDv2 checkpoints and rare SDv1 checkpoints",
default=None,
description="Prediction type for SDv2 checkpoint files", default="v_prediction"
),
) -> ImportModelResponse:
"""Add a model using its local path, repo_id, or remote URL. Model characteristics will be probed and configured automatically"""


@@ -8,6 +8,7 @@ app_config.parse_args()

if True: # hack to make flake8 happy with imports coming after setting up the config
import asyncio
import logging
import mimetypes
import socket
from inspect import signature
@@ -40,9 +41,7 @@ if True: # hack to make flake8 happy with imports coming after setting up the c
import invokeai.backend.util.mps_fixes # noqa: F401 (monkeypatching on import)


app_config = InvokeAIAppConfig.get_config()
app_config.parse_args()
logger = InvokeAILogger.get_logger(config=app_config)
logger = InvokeAILogger.getLogger(config=app_config)

# fix for windows mimetypes registry entries being borked
# see https://github.com/invoke-ai/InvokeAI/discussions/3684#discussioncomment-6391352
@@ -224,7 +223,7 @@ def invoke_api():
exc_info=e,
)
else:
jurigged.watch(logger=InvokeAILogger.get_logger(name="jurigged").info)
jurigged.watch(logger=InvokeAILogger.getLogger(name="jurigged").info)

port = find_port(app_config.port)
if port != app_config.port:
@@ -243,7 +242,7 @@ def invoke_api():

# replace uvicorn's loggers with InvokeAI's for consistent appearance
for logname in ["uvicorn.access", "uvicorn"]:
log = InvokeAILogger.get_logger(logname)
log = logging.getLogger(logname)
log.handlers.clear()
for ch in logger.handlers:
log.addHandler(ch)


@@ -7,6 +7,8 @@ from .services.config import InvokeAIAppConfig
# parse_args() must be called before any other imports. if it is not called first, consumers of the config
# which are imported/used before parse_args() is called will get the default config values instead of the
# values from the command line or config file.
config = InvokeAIAppConfig.get_config()
config.parse_args()

if True: # hack to make flake8 happy with imports coming after setting up the config
import argparse
@@ -59,9 +61,8 @@ if True: # hack to make flake8 happy with imports coming after setting up the c
if torch.backends.mps.is_available():
import invokeai.backend.util.mps_fixes # noqa: F401 (monkeypatching on import)

config = InvokeAIAppConfig.get_config()
config.parse_args()
logger = InvokeAILogger().get_logger(config=config)

logger = InvokeAILogger().getLogger(config=config)


class CliCommand(BaseModel):


@@ -88,12 +88,6 @@ class FieldDescriptions:
num_1 = "The first number"
num_2 = "The second number"
mask = "The mask to use for the operation"
board = "The board to save the image to"
image = "The image to process"
tile_size = "Tile size"
inclusive_low = "The inclusive low value"
exclusive_high = "The exclusive high value"
decimal_places = "The number of decimal places to round to"


class Input(str, Enum):
@@ -179,7 +173,6 @@ class UIType(str, Enum):
WorkflowField = "WorkflowField"
IsIntermediate = "IsIntermediate"
MetadataField = "MetadataField"
BoardField = "BoardField"
# endregion


@@ -663,8 +656,6 @@ def invocation(
:param Optional[str] title: Adds a title to the invocation. Use if the auto-generated title isn't quite right. Defaults to None.
:param Optional[list[str]] tags: Adds tags to the invocation. Invocations may be searched for by their tags. Defaults to None.
:param Optional[str] category: Adds a category to the invocation. Used to group the invocations in the UI. Defaults to None.
:param Optional[str] version: Adds a version to the invocation. Must be a valid semver string. Defaults to None.
:param Optional[bool] use_cache: Whether or not to use the invocation cache. Defaults to True. The user may override this in the workflow editor.
"""

def wrapper(cls: Type[GenericBaseInvocation]) -> Type[GenericBaseInvocation]:


@@ -559,33 +559,3 @@ class SamDetectorReproducibleColors(SamDetector):
            img[:, :] = ann_color
            final_img.paste(Image.fromarray(img, mode="RGB"), (0, 0), Image.fromarray(np.uint8(m * 255)))
        return np.array(final_img, dtype=np.uint8)


@invocation(
    "color_map_image_processor",
    title="Color Map Processor",
    tags=["controlnet"],
    category="controlnet",
    version="1.0.0",
)
class ColorMapImageProcessorInvocation(ImageProcessorInvocation):
    """Generates a color map from the provided image"""

    color_map_tile_size: int = InputField(default=64, ge=0, description=FieldDescriptions.tile_size)

    def run_processor(self, image: Image.Image):
        image = image.convert("RGB")
        image = np.array(image, dtype=np.uint8)
        height, width = image.shape[:2]

        width_tile_size = min(self.color_map_tile_size, width)
        height_tile_size = min(self.color_map_tile_size, height)

        color_map = cv2.resize(
            image,
            (width // width_tile_size, height // height_tile_size),
            interpolation=cv2.INTER_CUBIC,
        )
        color_map = cv2.resize(color_map, (width, height), interpolation=cv2.INTER_NEAREST)
        color_map = Image.fromarray(color_map)
        return color_map


@@ -1,692 +0,0 @@
import math
import re
from pathlib import Path
from typing import Optional, TypedDict

import cv2
import numpy as np
from mediapipe.python.solutions.face_mesh import FaceMesh  # type: ignore[import]
from PIL import Image, ImageDraw, ImageFilter, ImageFont, ImageOps
from PIL.Image import Image as ImageType
from pydantic import validator

import invokeai.assets.fonts as font_assets
from invokeai.app.invocations.baseinvocation import (
    BaseInvocation,
    InputField,
    InvocationContext,
    OutputField,
    invocation,
    invocation_output,
)
from invokeai.app.invocations.primitives import ImageField, ImageOutput
from invokeai.app.models.image import ImageCategory, ResourceOrigin


@invocation_output("face_mask_output")
class FaceMaskOutput(ImageOutput):
    """Base class for FaceMask output"""

    mask: ImageField = OutputField(description="The output mask")


@invocation_output("face_off_output")
class FaceOffOutput(ImageOutput):
    """Base class for FaceOff Output"""

    mask: ImageField = OutputField(description="The output mask")
    x: int = OutputField(description="The x coordinate of the bounding box's left side")
    y: int = OutputField(description="The y coordinate of the bounding box's top side")


class FaceResultData(TypedDict):
    image: ImageType
    mask: ImageType
    x_center: float
    y_center: float
    mesh_width: int
    mesh_height: int


class FaceResultDataWithId(FaceResultData):
    face_id: int


class ExtractFaceData(TypedDict):
    bounded_image: ImageType
    bounded_mask: ImageType
    x_min: int
    y_min: int
    x_max: int
    y_max: int


class FaceMaskResult(TypedDict):
    image: ImageType
    mask: ImageType


def create_white_image(w: int, h: int) -> ImageType:
    return Image.new("L", (w, h), color=255)


def create_black_image(w: int, h: int) -> ImageType:
    return Image.new("L", (w, h), color=0)


FONT_SIZE = 32
FONT_STROKE_WIDTH = 4


def prepare_faces_list(
    face_result_list: list[FaceResultData],
) -> list[FaceResultDataWithId]:
    """Deduplicates a list of faces, adding IDs to them."""
    deduped_faces: list[FaceResultData] = []

    if len(face_result_list) == 0:
        return list()

    for candidate in face_result_list:
        should_add = True
        candidate_x_center = candidate["x_center"]
        candidate_y_center = candidate["y_center"]
        for face in deduped_faces:
            face_center_x = face["x_center"]
            face_center_y = face["y_center"]
            face_radius_w = face["mesh_width"] / 2
            face_radius_h = face["mesh_height"] / 2
            # Determine if the center of the candidate_face is inside the ellipse of the added face
            # p < 1 -> Inside
            # p = 1 -> Exactly on the ellipse
            # p > 1 -> Outside
            p = (math.pow((candidate_x_center - face_center_x), 2) / math.pow(face_radius_w, 2)) + (
                math.pow((candidate_y_center - face_center_y), 2) / math.pow(face_radius_h, 2)
            )

            if p < 1:  # Inside of the already-added face's radius
                should_add = False
                break

        if should_add is True:
            deduped_faces.append(candidate)

    sorted_faces = sorted(deduped_faces, key=lambda x: x["y_center"])
    sorted_faces = sorted(sorted_faces, key=lambda x: x["x_center"])

    # add face_id for reference
    sorted_faces_with_ids: list[FaceResultDataWithId] = []
    face_id_counter = 0
    for face in sorted_faces:
        sorted_faces_with_ids.append(
            FaceResultDataWithId(
                **face,
                face_id=face_id_counter,
            )
        )
        face_id_counter += 1

    return sorted_faces_with_ids


def generate_face_box_mask(
    context: InvocationContext,
    minimum_confidence: float,
    x_offset: float,
    y_offset: float,
    pil_image: ImageType,
    chunk_x_offset: int = 0,
    chunk_y_offset: int = 0,
    draw_mesh: bool = True,
    check_bounds: bool = True,
) -> list[FaceResultData]:
    result = []
    mask_pil = None

    # Convert the PIL image to a NumPy array.
    np_image = np.array(pil_image, dtype=np.uint8)

    # Check if the input image has four channels (RGBA).
    if np_image.shape[2] == 4:
        # Convert RGBA to RGB by removing the alpha channel.
        np_image = np_image[:, :, :3]

    # Create a FaceMesh object for face landmark detection and mesh generation.
    face_mesh = FaceMesh(
        max_num_faces=999,
        min_detection_confidence=minimum_confidence,
        min_tracking_confidence=minimum_confidence,
    )

    # Detect the face landmarks and mesh in the input image.
    results = face_mesh.process(np_image)

    # Check if any face is detected.
    if results.multi_face_landmarks:  # type: ignore # this are via protobuf and not typed
        # Search for the face_id in the detected faces.
        for face_id, face_landmarks in enumerate(results.multi_face_landmarks):  # type: ignore #this are via protobuf and not typed
            # Get the bounding box of the face mesh.
            x_coordinates = [landmark.x for landmark in face_landmarks.landmark]
            y_coordinates = [landmark.y for landmark in face_landmarks.landmark]
            x_min, x_max = min(x_coordinates), max(x_coordinates)
            y_min, y_max = min(y_coordinates), max(y_coordinates)

            # Calculate the width and height of the face mesh.
            mesh_width = int((x_max - x_min) * np_image.shape[1])
            mesh_height = int((y_max - y_min) * np_image.shape[0])

            # Get the center of the face.
            x_center = np.mean([landmark.x * np_image.shape[1] for landmark in face_landmarks.landmark])
            y_center = np.mean([landmark.y * np_image.shape[0] for landmark in face_landmarks.landmark])

            face_landmark_points = np.array(
                [
                    [landmark.x * np_image.shape[1], landmark.y * np_image.shape[0]]
                    for landmark in face_landmarks.landmark
                ]
            )

            # Apply the scaling offsets to the face landmark points with a multiplier.
            scale_multiplier = 0.2
            x_center = np.mean(face_landmark_points[:, 0])
            y_center = np.mean(face_landmark_points[:, 1])

            if draw_mesh:
                x_scaled = face_landmark_points[:, 0] + scale_multiplier * x_offset * (
                    face_landmark_points[:, 0] - x_center
                )
                y_scaled = face_landmark_points[:, 1] + scale_multiplier * y_offset * (
                    face_landmark_points[:, 1] - y_center
                )

                convex_hull = cv2.convexHull(np.column_stack((x_scaled, y_scaled)).astype(np.int32))

                # Generate a binary face mask using the face mesh.
                mask_image = np.ones(np_image.shape[:2], dtype=np.uint8) * 255
                cv2.fillConvexPoly(mask_image, convex_hull, 0)

                # Convert the binary mask image to a PIL Image.
                init_mask_pil = Image.fromarray(mask_image, mode="L")
                w, h = init_mask_pil.size
                mask_pil = create_white_image(w + chunk_x_offset, h + chunk_y_offset)
                mask_pil.paste(init_mask_pil, (chunk_x_offset, chunk_y_offset))

            left_side = x_center - mesh_width
            right_side = x_center + mesh_width
            top_side = y_center - mesh_height
            bottom_side = y_center + mesh_height
            im_width, im_height = pil_image.size
            over_w = im_width * 0.1
            over_h = im_height * 0.1
            if not check_bounds or (
                (left_side >= -over_w)
                and (right_side < im_width + over_w)
                and (top_side >= -over_h)
                and (bottom_side < im_height + over_h)
            ):
                x_center = float(x_center)
                y_center = float(y_center)
                face = FaceResultData(
                    image=pil_image,
                    mask=mask_pil or create_white_image(*pil_image.size),
                    x_center=x_center + chunk_x_offset,
                    y_center=y_center + chunk_y_offset,
                    mesh_width=mesh_width,
                    mesh_height=mesh_height,
                )

                result.append(face)
            else:
                context.services.logger.info("FaceTools --> Face out of bounds, ignoring.")

    return result


def extract_face(
    context: InvocationContext,
    image: ImageType,
    face: FaceResultData,
    padding: int,
) -> ExtractFaceData:
    mask = face["mask"]
    center_x = face["x_center"]
    center_y = face["y_center"]
    mesh_width = face["mesh_width"]
    mesh_height = face["mesh_height"]

    # Determine the minimum size of the square crop
    min_size = min(mask.width, mask.height)

    # Calculate the crop boundaries for the output image and mask.
    mesh_width += 128 + padding  # add pixels to account for mask variance
    mesh_height += 128 + padding  # add pixels to account for mask variance
    crop_size = min(
        max(mesh_width, mesh_height, 128), min_size
    )  # Choose the smaller of the two (given value or face mask size)
    if crop_size > 128:
        crop_size = (crop_size + 7) // 8 * 8  # Ensure crop side is multiple of 8

    # Calculate the actual crop boundaries within the bounds of the original image.
    x_min = int(center_x - crop_size / 2)
    y_min = int(center_y - crop_size / 2)
    x_max = int(center_x + crop_size / 2)
    y_max = int(center_y + crop_size / 2)

    # Adjust the crop boundaries to stay within the original image's dimensions
    if x_min < 0:
        context.services.logger.warning("FaceTools --> -X-axis padding reached image edge.")
        x_max -= x_min
        x_min = 0
    elif x_max > mask.width:
        context.services.logger.warning("FaceTools --> +X-axis padding reached image edge.")
        x_min -= x_max - mask.width