Mirror of https://github.com/invoke-ai/InvokeAI.git (synced 2026-01-23 09:28:01 -05:00)

Compare commits: v5.4.0 ... ryan/flux- (8 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | 766c6082a3 | | |
| | ad5d528204 | | |
| | 536ccf071c | | |
| | ca13c3b12f | | |
| | d54c1ef9ba | | |
| | e9f722aa7d | | |
| | 139da133bd | | |
| | 57820929d5 | | |

@@ -105,7 +105,7 @@ Invoke features an organized gallery system for easily storing, accessing, and r

|

||||

### Other features

|

||||

|

||||

- Support for both ckpt and diffusers models

|

||||

- SD1.5, SD2.0, SDXL, and FLUX support

|

||||

- SD1.5, SD2.0, and SDXL support

|

||||

- Upscaling Tools

|

||||

- Embedding Manager & Support

|

||||

- Model Manager & Support

|

||||

|

||||

@@ -38,9 +38,9 @@ RUN --mount=type=cache,target=/root/.cache/pip \

|

||||

if [ "$TARGETPLATFORM" = "linux/arm64" ] || [ "$GPU_DRIVER" = "cpu" ]; then \

|

||||

extra_index_url_arg="--extra-index-url https://download.pytorch.org/whl/cpu"; \

|

||||

elif [ "$GPU_DRIVER" = "rocm" ]; then \

|

||||

extra_index_url_arg="--extra-index-url https://download.pytorch.org/whl/rocm6.1"; \

|

||||

extra_index_url_arg="--extra-index-url https://download.pytorch.org/whl/rocm5.6"; \

|

||||

else \

|

||||

extra_index_url_arg="--extra-index-url https://download.pytorch.org/whl/cu124"; \

|

||||

extra_index_url_arg="--extra-index-url https://download.pytorch.org/whl/cu121"; \

|

||||

fi &&\

|

||||

|

||||

# xformers + triton fails to install on arm64

|

||||

|

||||

@@ -1,7 +1,7 @@

|

||||

# Copyright (c) 2023 Eugene Brodsky https://github.com/ebr

|

||||

|

||||

x-invokeai: &invokeai

|

||||

image: "ghcr.io/invoke-ai/invokeai:latest"

|

||||

image: "local/invokeai:latest"

|

||||

build:

|

||||

context: ..

|

||||

dockerfile: docker/Dockerfile

|

||||

|

||||

@@ -144,7 +144,7 @@ As you might have noticed, we added two new arguments to the `InputField`

|

||||

definition for `width` and `height`, called `gt` and `le`. They stand for

|

||||

_greater than or equal to_ and _less than or equal to_.

|

||||

|

||||

These impose constraints on those fields, and will raise an exception if the

|

||||

These impose contraints on those fields, and will raise an exception if the

|

||||

values do not meet the constraints. Field constraints are provided by

|

||||

**pydantic**, so anything you see in the **pydantic docs** will work.

|

||||

|

||||

|

||||
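The field constraints discussed in this hunk come from pydantic, where `gt` is a strict lower bound (greater than) and `le` is an inclusive upper bound (less than or equal to); the inclusive lower bound is spelled `ge`. The block below is a standard-library sketch of those semantics only, not pydantic itself. The helper name `check_bounds` and the example limits are hypothetical and do not appear in the InvokeAI source.

```python
from typing import Optional


def check_bounds(name: str, value: int, gt: Optional[int] = None, le: Optional[int] = None) -> int:
    """Return value unchanged, or raise ValueError if it violates a bound.

    gt is a strict lower bound (value must be > gt).
    le is an inclusive upper bound (value must be <= le).
    """
    if gt is not None and not value > gt:
        raise ValueError(f"{name} must be greater than {gt}, got {value}")
    if le is not None and not value <= le:
        raise ValueError(f"{name} must be less than or equal to {le}, got {value}")
    return value


# Hypothetical limits for a width field: strictly positive, at most 4096.
width = check_bounds("width", 512, gt=0, le=4096)
```

With these limits, `4096` is accepted because `le` is inclusive, while `0` is rejected because `gt` is strict.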

@@ -239,7 +239,7 @@ Consult the

|

||||

get it set up.

|

||||

|

||||

Suggest using VSCode's included settings sync so that your remote dev host has

|

||||

all the same app settings and extensions automatically.

|

||||

all the same app settings and extensions automagically.

|

||||

|

||||

##### One remote dev gotcha

|

||||

|

||||

|

||||

@@ -2,7 +2,7 @@

|

||||

|

||||

## **What do I need to know to help?**

|

||||

|

||||

If you are looking to help with a code contribution, InvokeAI uses several different technologies under the hood: Python (Pydantic, FastAPI, diffusers) and Typescript (React, Redux Toolkit, ChakraUI, Mantine, Konva). Familiarity with StableDiffusion and image generation concepts is helpful, but not essential.

|

||||

If you are looking to help to with a code contribution, InvokeAI uses several different technologies under the hood: Python (Pydantic, FastAPI, diffusers) and Typescript (React, Redux Toolkit, ChakraUI, Mantine, Konva). Familiarity with StableDiffusion and image generation concepts is helpful, but not essential.

|

||||

|

||||

|

||||

## **Get Started**

|

||||

|

||||

@@ -5,7 +5,7 @@ If you're a new contributor to InvokeAI or Open Source Projects, this is the gui

|

||||

## New Contributor Checklist

|

||||

|

||||

- [x] Set up your local development environment & fork of InvokAI by following [the steps outlined here](../dev-environment.md)

|

||||

- [x] Set up your local tooling with [this guide](../LOCAL_DEVELOPMENT.md). Feel free to skip this step if you already have tooling you're comfortable with.

|

||||

- [x] Set up your local tooling with [this guide](InvokeAI/contributing/LOCAL_DEVELOPMENT/#developing-invokeai-in-vscode). Feel free to skip this step if you already have tooling you're comfortable with.

|

||||

- [x] Familiarize yourself with [Git](https://www.atlassian.com/git) & our project structure by reading through the [development documentation](development.md)

|

||||

- [x] Join the [#dev-chat](https://discord.com/channels/1020123559063990373/1049495067846524939) channel of the Discord

|

||||

- [x] Choose an issue to work on! This can be achieved by asking in the #dev-chat channel, tackling a [good first issue](https://github.com/invoke-ai/InvokeAI/contribute) or finding an item on the [roadmap](https://github.com/orgs/invoke-ai/projects/7). If nothing in any of those places catches your eye, feel free to work on something of interest to you!

|

||||

|

||||

@@ -1,6 +1,6 @@

|

||||

# Tutorials

|

||||

|

||||

Tutorials help new & existing users expand their ability to use InvokeAI to the full extent of our features and services.

|

||||

Tutorials help new & existing users expand their abilty to use InvokeAI to the full extent of our features and services.

|

||||

|

||||

Currently, we have a set of tutorials available on our [YouTube channel](https://www.youtube.com/@invokeai), but as InvokeAI continues to evolve with new updates, we want to ensure that we are giving our users the resources they need to succeed.

|

||||

|

||||

@@ -8,4 +8,4 @@ Tutorials can be in the form of videos or article walkthroughs on a subject of y

|

||||

|

||||

## Contributing

|

||||

|

||||

Please reach out to @imic or @hipsterusername on [Discord](https://discord.gg/ZmtBAhwWhy) to help create tutorials for InvokeAI.

|

||||

Please reach out to @imic or @hipsterusername on [Discord](https://discord.gg/ZmtBAhwWhy) to help create tutorials for InvokeAI.

|

||||

@@ -17,49 +17,46 @@ If you just want to use Invoke, you should use the [installer][installer link].

|

||||

## Setup

|

||||

|

||||

1. Run through the [requirements][requirements link].

|

||||

2. [Fork and clone][forking link] the [InvokeAI repo][repo link].

|

||||

3. Create an directory for user data (images, models, db, etc). This is typically at `~/invokeai`, but if you already have a non-dev install, you may want to create a separate directory for the dev install.

|

||||

4. Create a python virtual environment inside the directory you just created:

|

||||

1. [Fork and clone][forking link] the [InvokeAI repo][repo link].

|

||||

1. Create an directory for user data (images, models, db, etc). This is typically at `~/invokeai`, but if you already have a non-dev install, you may want to create a separate directory for the dev install.

|

||||

1. Create a python virtual environment inside the directory you just created:

|

||||

|

||||

```sh

|

||||

python3 -m venv .venv --prompt InvokeAI-Dev

|

||||

```

|

||||

```sh

|

||||

python3 -m venv .venv --prompt InvokeAI-Dev

|

||||

```

|

||||

|

||||

5. Activate the venv (you'll need to do this every time you want to run the app):

|

||||

1. Activate the venv (you'll need to do this every time you want to run the app):

|

||||

|

||||

```sh

|

||||

source .venv/bin/activate

|

||||

```

|

||||

```sh

|

||||

source .venv/bin/activate

|

||||

```

|

||||

|

||||

6. Install the repo as an [editable install][editable install link]:

|

||||

1. Install the repo as an [editable install][editable install link]:

|

||||

|

||||

```sh

|

||||

pip install -e ".[dev,test,xformers]" --use-pep517 --extra-index-url https://download.pytorch.org/whl/cu121

|

||||

```

|

||||

```sh

|

||||

pip install -e ".[dev,test,xformers]" --use-pep517 --extra-index-url https://download.pytorch.org/whl/cu121

|

||||

```

|

||||

|

||||

Refer to the [manual installation][manual install link]] instructions for more determining the correct install options. `xformers` is optional, but `dev` and `test` are not.

|

||||

Refer to the [manual installation][manual install link]] instructions for more determining the correct install options. `xformers` is optional, but `dev` and `test` are not.

|

||||

|

||||

7. Install the frontend dev toolchain:

|

||||

1. Install the frontend dev toolchain:

|

||||

|

||||

- [`nodejs`](https://nodejs.org/) (recommend v20 LTS)

|

||||

- [`pnpm`](https://pnpm.io/8.x/installation) (must be v8 - not v9!)

|

||||

- [`pnpm`](https://pnpm.io/installation#installing-a-specific-version) (must be v8 - not v9!)

|

||||

|

||||

8. Do a production build of the frontend:

|

||||

1. Do a production build of the frontend:

|

||||

|

||||

```sh

|

||||

cd PATH_TO_INVOKEAI_REPO/invokeai/frontend/web

|

||||

pnpm i

|

||||

pnpm build

|

||||

```

|

||||

```sh

|

||||

pnpm build

|

||||

```

|

||||

|

||||

9. Start the application:

|

||||

1. Start the application:

|

||||

|

||||

```sh

|

||||

cd PATH_TO_INVOKEAI_REPO

|

||||

python scripts/invokeai-web.py

|

||||

```

|

||||

```sh

|

||||

python scripts/invokeai-web.py

|

||||

```

|

||||

|

||||

10. Access the UI at `localhost:9090`.

|

||||

1. Access the UI at `localhost:9090`.

|

||||

|

||||

## Updating the UI

|

||||

|

||||

|

||||

@@ -209,7 +209,7 @@ checkpoint models.

|

||||

|

||||

To solve this, go to the Model Manager tab (the cube), select the

|

||||

checkpoint model that's giving you trouble, and press the "Convert"

|

||||

button in the upper right of your browser window. This will convert the

|

||||

button in the upper right of your browser window. This will conver the

|

||||

checkpoint into a diffusers model, after which loading should be

|

||||

faster and less memory-intensive.

|

||||

|

||||

|

||||

@@ -97,16 +97,16 @@ Prior to installing PyPatchMatch, you need to take the following steps:

|

||||

sudo pacman -S --needed base-devel

|

||||

```

|

||||

|

||||

2. Install `opencv`, `blas`, and required dependencies:

|

||||

2. Install `opencv` and `blas`:

|

||||

|

||||

```sh

|

||||

sudo pacman -S opencv blas fmt glew vtk hdf5

|

||||

sudo pacman -S opencv blas

|

||||

```

|

||||

|

||||

or for CUDA support

|

||||

|

||||

```sh

|

||||

sudo pacman -S opencv-cuda blas fmt glew vtk hdf5

|

||||

sudo pacman -S opencv-cuda blas

|

||||

```

|

||||

|

||||

3. Fix the naming of the `opencv` package configuration file:

|

||||

|

||||

@@ -21,7 +21,6 @@ To use a community workflow, download the `.json` node graph file and load it in

|

||||

+ [Clothing Mask](#clothing-mask)

|

||||

+ [Contrast Limited Adaptive Histogram Equalization](#contrast-limited-adaptive-histogram-equalization)

|

||||

+ [Depth Map from Wavefront OBJ](#depth-map-from-wavefront-obj)

|

||||

+ [Enhance Detail](#enhance-detail)

|

||||

+ [Film Grain](#film-grain)

|

||||

+ [Generative Grammar-Based Prompt Nodes](#generative-grammar-based-prompt-nodes)

|

||||

+ [GPT2RandomPromptMaker](#gpt2randompromptmaker)

|

||||

@@ -40,9 +39,7 @@ To use a community workflow, download the `.json` node graph file and load it in

|

||||

+ [Match Histogram](#match-histogram)

|

||||

+ [Metadata-Linked](#metadata-linked-nodes)

|

||||

+ [Negative Image](#negative-image)

|

||||

+ [Nightmare Promptgen](#nightmare-promptgen)

|

||||

+ [Ollama](#ollama-node)

|

||||

+ [One Button Prompt](#one-button-prompt)

|

||||

+ [Nightmare Promptgen](#nightmare-promptgen)

|

||||

+ [Oobabooga](#oobabooga)

|

||||

+ [Prompt Tools](#prompt-tools)

|

||||

+ [Remote Image](#remote-image)

|

||||

@@ -82,7 +79,7 @@ Note: These are inherited from the core nodes so any update to the core nodes sh

|

||||

|

||||

**Example Usage:**

|

||||

</br>

|

||||

<img src="https://raw.githubusercontent.com/skunkworxdark/autostereogram_nodes/refs/heads/main/images/spider.png" width="200" /> -> <img src="https://raw.githubusercontent.com/skunkworxdark/autostereogram_nodes/refs/heads/main/images/spider-depth.png" width="200" /> -> <img src="https://raw.githubusercontent.com/skunkworxdark/autostereogram_nodes/refs/heads/main/images/spider-dots.png" width="200" /> <img src="https://raw.githubusercontent.com/skunkworxdark/autostereogram_nodes/refs/heads/main/images/spider-pattern.png" width="200" />

|

||||

<img src="https://github.com/skunkworxdark/autostereogram_nodes/blob/main/images/spider.png" width="200" /> -> <img src="https://github.com/skunkworxdark/autostereogram_nodes/blob/main/images/spider-depth.png" width="200" /> -> <img src="https://github.com/skunkworxdark/autostereogram_nodes/raw/main/images/spider-dots.png" width="200" /> <img src="https://github.com/skunkworxdark/autostereogram_nodes/raw/main/images/spider-pattern.png" width="200" />

|

||||

|

||||

--------------------------------

|

||||

### Average Images

|

||||

@@ -143,17 +140,6 @@ To be imported, an .obj must use triangulated meshes, so make sure to enable tha

|

||||

**Example Usage:**

|

||||

</br><img src="https://raw.githubusercontent.com/dwringer/depth-from-obj-node/main/depth_from_obj_usage.jpg" width="500" />

|

||||

|

||||

--------------------------------

|

||||

### Enhance Detail

|

||||

|

||||

**Description:** A single node that can enhance the detail in an image. Increase or decrease details in an image using a guided filter (as opposed to the typical Gaussian blur used by most sharpening filters.) Based on the `Enhance Detail` ComfyUI node from https://github.com/spacepxl/ComfyUI-Image-Filters

|

||||

|

||||

**Node Link:** https://github.com/skunkworxdark/enhance-detail-node

|

||||

|

||||

**Example Usage:**

|

||||

</br>

|

||||

<img src="https://raw.githubusercontent.com/skunkworxdark/enhance-detail-node/refs/heads/main/images/Comparison.png" />

|

||||

|

||||

--------------------------------

|

||||

### Film Grain

|

||||

|

||||

@@ -320,7 +306,7 @@ View:

|

||||

**Node Link:** https://github.com/helix4u/load_video_frame

|

||||

|

||||

**Output Example:**

|

||||

<img src="https://raw.githubusercontent.com/helix4u/load_video_frame/refs/heads/main/_git_assets/dance1736978273.gif" width="500" />

|

||||

<img src="https://raw.githubusercontent.com/helix4u/load_video_frame/main/_git_assets/testmp4_embed_converted.gif" width="500" />

|

||||

|

||||

--------------------------------

|

||||

### Make 3D

|

||||

@@ -361,7 +347,7 @@ See full docs here: https://github.com/skunkworxdark/Prompt-tools-nodes/edit/mai

|

||||

|

||||

**Output Examples**

|

||||

|

||||

<img src="https://github.com/skunkworxdark/match_histogram/assets/21961335/ed12f329-a0ef-444a-9bae-129ed60d6097" />

|

||||

<img src="https://github.com/skunkworxdark/match_histogram/assets/21961335/ed12f329-a0ef-444a-9bae-129ed60d6097" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### Metadata Linked Nodes

|

||||

@@ -403,34 +389,6 @@ View:

|

||||

|

||||

**Node Link:** [https://github.com/gogurtenjoyer/nightmare-promptgen](https://github.com/gogurtenjoyer/nightmare-promptgen)

|

||||

|

||||

--------------------------------

|

||||

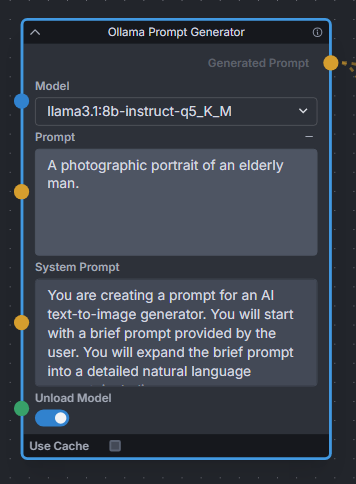

### Ollama Node

|

||||

|

||||

**Description:** Uses Ollama API to expand text prompts for text-to-image generation using local LLMs. Works great for expanding basic prompts into detailed natural language prompts for Flux. Also provides a toggle to unload the LLM model immediately after expanding, to free up VRAM for Invoke to continue the image generation workflow.

|

||||

|

||||

**Node Link:** https://github.com/Jonseed/Ollama-Node

|

||||

|

||||

**Example Node Graph:** https://github.com/Jonseed/Ollama-Node/blob/main/Ollama-Node-Flux-example.json

|

||||

|

||||

**View:**

|

||||

|

||||

|

||||

|

||||

--------------------------------

|

||||

### One Button Prompt

|

||||

|

||||

<img src="https://raw.githubusercontent.com/AIrjen/OneButtonPrompt_X_InvokeAI/refs/heads/main/images/background.png" width="800" />

|

||||

|

||||

**Description:** an extensive suite of auto prompt generation and prompt helper nodes based on extensive logic. Get creative with the best prompt generator in the world.

|

||||

|

||||

The main node generates interesting prompts based on a set of parameters. There are also some additional nodes such as Auto Negative Prompt, One Button Artify, Create Prompt Variant and other cool prompt toys to play around with.

|

||||

|

||||

**Node Link:** [https://github.com/AIrjen/OneButtonPrompt_X_InvokeAI](https://github.com/AIrjen/OneButtonPrompt_X_InvokeAI)

|

||||

|

||||

**Nodes:**

|

||||

|

||||

<img src="https://raw.githubusercontent.com/AIrjen/OneButtonPrompt_X_InvokeAI/refs/heads/main/images/OBP_nodes_invokeai.png" width="800" />

|

||||

|

||||

--------------------------------

|

||||

### Oobabooga

|

||||

|

||||

@@ -482,7 +440,7 @@ See full docs here: https://github.com/skunkworxdark/Prompt-tools-nodes/edit/mai

|

||||

|

||||

**Workflow Examples**

|

||||

|

||||

<img src="https://raw.githubusercontent.com/skunkworxdark/prompt-tools/refs/heads/main/images/CSVToIndexStringNode.png"/>

|

||||

<img src="https://github.com/skunkworxdark/prompt-tools/blob/main/images/CSVToIndexStringNode.png" width="300" />

|

||||

|

||||

--------------------------------

|

||||

### Remote Image

|

||||

@@ -620,7 +578,7 @@ See full docs here: https://github.com/skunkworxdark/XYGrid_nodes/edit/main/READ

|

||||

|

||||

**Output Examples**

|

||||

|

||||

<img src="https://raw.githubusercontent.com/skunkworxdark/XYGrid_nodes/refs/heads/main/images/collage.png" />

|

||||

<img src="https://github.com/skunkworxdark/XYGrid_nodes/blob/main/images/collage.png" width="300" />

|

||||

|

||||

|

||||

--------------------------------

|

||||

|

||||

6

flake.lock

generated

@@ -2,11 +2,11 @@

|

||||

"nodes": {

|

||||

"nixpkgs": {

|

||||

"locked": {

|

||||

"lastModified": 1727955264,

|

||||

"narHash": "sha256-lrd+7mmb5NauRoMa8+J1jFKYVa+rc8aq2qc9+CxPDKc=",

|

||||

"lastModified": 1690630721,

|

||||

"narHash": "sha256-Y04onHyBQT4Erfr2fc82dbJTfXGYrf4V0ysLUYnPOP8=",

|

||||

"owner": "NixOS",

|

||||

"repo": "nixpkgs",

|

||||

"rev": "71cd616696bd199ef18de62524f3df3ffe8b9333",

|

||||

"rev": "d2b52322f35597c62abf56de91b0236746b2a03d",

|

||||

"type": "github"

|

||||

},

|

||||

"original": {

|

||||

|

||||

@@ -34,7 +34,7 @@

|

||||

cudaPackages.cudnn

|

||||

cudaPackages.cuda_nvrtc

|

||||

cudatoolkit

|

||||

pkg-config

|

||||

pkgconfig

|

||||

libconfig

|

||||

cmake

|

||||

blas

|

||||

@@ -66,7 +66,7 @@

|

||||

black

|

||||

|

||||

# Frontend.

|

||||

pnpm_8

|

||||

yarn

|

||||

nodejs

|

||||

];

|

||||

LD_LIBRARY_PATH = pkgs.lib.makeLibraryPath buildInputs;

|

||||

|

||||

@@ -12,7 +12,7 @@ MINIMUM_PYTHON_VERSION=3.10.0

|

||||

MAXIMUM_PYTHON_VERSION=3.11.100

|

||||

PYTHON=""

|

||||

for candidate in python3.11 python3.10 python3 python ; do

|

||||

if ppath=`which $candidate 2>/dev/null`; then

|

||||

if ppath=`which $candidate`; then

|

||||

# when using `pyenv`, the executable for an inactive Python version will exist but will not be operational

|

||||

# we check that this found executable can actually run

|

||||

if [ $($candidate --version &>/dev/null; echo ${PIPESTATUS}) -gt 0 ]; then continue; fi

|

||||
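The interpreter search in this hunk tries each candidate name, skips names that are not on the `PATH`, and also skips executables that exist but cannot run (the `pyenv` case mentioned in the comment). A rough Python equivalent of that loop might look like the sketch below. The function name `find_working_python` is hypothetical, and the version-range check that the installer script performs afterwards is deliberately left out.

```python
import shutil
import subprocess
from typing import Iterable, Optional


def find_working_python(candidates: Iterable[str]) -> Optional[str]:
    """Return the path of the first candidate that exists and can actually run."""
    for candidate in candidates:
        path = shutil.which(candidate)
        if path is None:
            continue  # candidate is not on PATH
        try:
            # An inactive pyenv shim exists on disk but exits non-zero when invoked.
            result = subprocess.run(
                [path, "--version"],
                stdout=subprocess.DEVNULL,
                stderr=subprocess.DEVNULL,
                timeout=10,
            )
        except (OSError, subprocess.TimeoutExpired):
            continue
        if result.returncode == 0:
            return path
    return None


# Same candidate order as the shell script.
python_path = find_working_python(["python3.11", "python3.10", "python3", "python"])
```

Redirecting the output of `--version` mirrors the `2>/dev/null` added on one side of the diff: a missing candidate should be skipped quietly rather than printing an error.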

@@ -30,11 +30,10 @@ done

|

||||

if [ -z "$PYTHON" ]; then

|

||||

echo "A suitable Python interpreter could not be found"

|

||||

echo "Please install Python $MINIMUM_PYTHON_VERSION or higher (maximum $MAXIMUM_PYTHON_VERSION) before running this script. See instructions at $INSTRUCTIONS for help."

|

||||

echo "For the best user experience we suggest enlarging or maximizing this window now."

|

||||

read -p "Press any key to exit"

|

||||

exit -1

|

||||

fi

|

||||

|

||||

echo "For the best user experience we suggest enlarging or maximizing this window now."

|

||||

|

||||

exec $PYTHON ./lib/main.py ${@}

|

||||

read -p "Press any key to exit"

|

||||

|

||||

@@ -245,9 +245,6 @@ class InvokeAiInstance:

|

||||

|

||||

pip = local[self.pip]

|

||||

|

||||

# Uninstall xformers if it is present; the correct version of it will be reinstalled if needed

|

||||

_ = pip["uninstall", "-yqq", "xformers"] & FG

|

||||

|

||||

pipeline = pip[

|

||||

"install",

|

||||

"--require-virtualenv",

|

||||

@@ -285,6 +282,12 @@ class InvokeAiInstance:

|

||||

shutil.copy(src, dest)

|

||||

os.chmod(dest, 0o0755)

|

||||

|

||||

def update(self):

|

||||

pass

|

||||

|

||||

def remove(self):

|

||||

pass

|

||||

|

||||

|

||||

### Utility functions ###

|

||||

|

||||

@@ -399,7 +402,7 @@ def get_torch_source() -> Tuple[str | None, str | None]:

|

||||

:rtype: list

|

||||

"""

|

||||

|

||||

from messages import GpuType, select_gpu

|

||||

from messages import select_gpu

|

||||

|

||||

# device can be one of: "cuda", "rocm", "cpu", "cuda_and_dml, autodetect"

|

||||

device = select_gpu()

|

||||

@@ -409,22 +412,16 @@ def get_torch_source() -> Tuple[str | None, str | None]:

|

||||

url = None

|

||||

optional_modules: str | None = None

|

||||

if OS == "Linux":

|

||||

if device == GpuType.ROCM:

|

||||

url = "https://download.pytorch.org/whl/rocm6.1"

|

||||

elif device == GpuType.CPU:

|

||||

if device.value == "rocm":

|

||||

url = "https://download.pytorch.org/whl/rocm5.6"

|

||||

elif device.value == "cpu":

|

||||

url = "https://download.pytorch.org/whl/cpu"

|

||||

elif device == GpuType.CUDA:

|

||||

url = "https://download.pytorch.org/whl/cu124"

|

||||

optional_modules = "[onnx-cuda]"

|

||||

elif device == GpuType.CUDA_WITH_XFORMERS:

|

||||

url = "https://download.pytorch.org/whl/cu124"

|

||||

elif device.value == "cuda":

|

||||

# CUDA uses the default PyPi index

|

||||

optional_modules = "[xformers,onnx-cuda]"

|

||||

elif OS == "Windows":

|

||||

if device == GpuType.CUDA:

|

||||

url = "https://download.pytorch.org/whl/cu124"

|

||||

optional_modules = "[onnx-cuda]"

|

||||

elif device == GpuType.CUDA_WITH_XFORMERS:

|

||||

url = "https://download.pytorch.org/whl/cu124"

|

||||

if device.value == "cuda":

|

||||

url = "https://download.pytorch.org/whl/cu121"

|

||||

optional_modules = "[xformers,onnx-cuda]"

|

||||

elif device.value == "cpu":

|

||||

# CPU uses the default PyPi index, no optional modules

|

||||

|

||||
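The branching above maps an operating system and a GPU choice to a PyTorch wheel index URL plus optional pip extras. As a reading aid, the `GpuType` side of the hunk can be restated as a lookup table. This is a sketch under assumptions: `TORCH_SOURCES` and `torch_source_for` are hypothetical names, only the branches visible in the hunk are included, and any combination not listed falls back to `(None, None)`, meaning the default index with no extras.

```python
from enum import Enum
from typing import Dict, Optional, Tuple


class GpuType(Enum):
    # Members as shown in the messages.py hunk of this diff.
    CUDA_WITH_XFORMERS = "xformers"
    CUDA = "cuda"
    ROCM = "rocm"
    CPU = "cpu"


PYTORCH_WHL = "https://download.pytorch.org/whl"

# (os name, device) -> (extra index URL, optional pip extras)
TORCH_SOURCES: Dict[Tuple[str, GpuType], Tuple[Optional[str], Optional[str]]] = {
    ("Linux", GpuType.ROCM): (f"{PYTORCH_WHL}/rocm6.1", None),
    ("Linux", GpuType.CPU): (f"{PYTORCH_WHL}/cpu", None),
    ("Linux", GpuType.CUDA): (f"{PYTORCH_WHL}/cu124", "[onnx-cuda]"),
    ("Linux", GpuType.CUDA_WITH_XFORMERS): (f"{PYTORCH_WHL}/cu124", "[xformers,onnx-cuda]"),
    ("Windows", GpuType.CUDA): (f"{PYTORCH_WHL}/cu124", "[onnx-cuda]"),
    ("Windows", GpuType.CUDA_WITH_XFORMERS): (f"{PYTORCH_WHL}/cu124", "[xformers,onnx-cuda]"),
}


def torch_source_for(os_name: str, device: GpuType) -> Tuple[Optional[str], Optional[str]]:
    """Look up the wheel index URL and pip extras; unknown pairs use the defaults."""
    return TORCH_SOURCES.get((os_name, device), (None, None))


url, extras = torch_source_for("Linux", GpuType.ROCM)
```

Comparing enum members directly, as the `GpuType` side does, avoids the string comparisons on `device.value` used on the other side of the diff.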

@@ -206,7 +206,6 @@ def dest_path(dest: Optional[str | Path] = None) -> Path | None:

|

||||

|

||||

|

||||

class GpuType(Enum):

|

||||

CUDA_WITH_XFORMERS = "xformers"

|

||||

CUDA = "cuda"

|

||||

ROCM = "rocm"

|

||||

CPU = "cpu"

|

||||

@@ -222,15 +221,11 @@ def select_gpu() -> GpuType:

|

||||

return GpuType.CPU

|

||||

|

||||

nvidia = (

|

||||

"an [gold1 b]NVIDIA[/] RTX 3060 or newer GPU using CUDA",

|

||||

"an [gold1 b]NVIDIA[/] GPU (using CUDA™)",

|

||||

GpuType.CUDA,

|

||||

)

|

||||

vintage_nvidia = (

|

||||

"an [gold1 b]NVIDIA[/] RTX 20xx or older GPU using CUDA+xFormers",

|

||||

GpuType.CUDA_WITH_XFORMERS,

|

||||

)

|

||||

amd = (

|

||||

"an [gold1 b]AMD[/] GPU using ROCm",

|

||||

"an [gold1 b]AMD[/] GPU (using ROCm™)",

|

||||

GpuType.ROCM,

|

||||

)

|

||||

cpu = (

|

||||

@@ -240,13 +235,14 @@ def select_gpu() -> GpuType:

|

||||

|

||||

options = []

|

||||

if OS == "Windows":

|

||||

options = [nvidia, vintage_nvidia, cpu]

|

||||

options = [nvidia, cpu]

|

||||

if OS == "Linux":

|

||||

options = [nvidia, vintage_nvidia, amd, cpu]

|

||||

options = [nvidia, amd, cpu]

|

||||

elif OS == "Darwin":

|

||||

options = [cpu]

|

||||

|

||||

if len(options) == 1:

|

||||

print(f'Your platform [gold1]{OS}-{ARCH}[/] only supports the "{options[0][1]}" driver. Proceeding with that.')

|

||||

return options[0][1]

|

||||

|

||||

options = {str(i): opt for i, opt in enumerate(options, 1)}

|

||||

@@ -259,7 +255,7 @@ def select_gpu() -> GpuType:

|

||||

[

|

||||

f"Detected the [gold1]{OS}-{ARCH}[/] platform",

|

||||

"",

|

||||

"See [deep_sky_blue1]https://invoke-ai.github.io/InvokeAI/installation/requirements/[/] to ensure your system meets the minimum requirements.",

|

||||

"See [deep_sky_blue1]https://invoke-ai.github.io/InvokeAI/#system[/] to ensure your system meets the minimum requirements.",

|

||||

"",

|

||||

"[red3]🠶[/] [b]Your GPU drivers must be correctly installed before using InvokeAI![/] [red3]🠴[/]",

|

||||

]

|

||||

|

||||

@@ -68,7 +68,7 @@ do_line_input() {

|

||||

printf "2: Open the developer console\n"

|

||||

printf "3: Command-line help\n"

|

||||

printf "Q: Quit\n\n"

|

||||

printf "To update, download and run the installer from https://github.com/invoke-ai/InvokeAI/releases/latest\n\n"

|

||||

printf "To update, download and run the installer from https://github.com/invoke-ai/InvokeAI/releases/latest.\n\n"

|

||||

read -p "Please enter 1-4, Q: [1] " yn

|

||||

choice=${yn:='1'}

|

||||

do_choice $choice

|

||||

|

||||

@@ -5,10 +5,9 @@ from fastapi.routing import APIRouter

|

||||

from pydantic import BaseModel, Field

|

||||

|

||||

from invokeai.app.api.dependencies import ApiDependencies

|

||||

from invokeai.app.services.board_records.board_records_common import BoardChanges, BoardRecordOrderBy

|

||||

from invokeai.app.services.board_records.board_records_common import BoardChanges

|

||||

from invokeai.app.services.boards.boards_common import BoardDTO

|

||||

from invokeai.app.services.shared.pagination import OffsetPaginatedResults

|

||||

from invokeai.app.services.shared.sqlite.sqlite_common import SQLiteDirection

|

||||

|

||||

boards_router = APIRouter(prefix="/v1/boards", tags=["boards"])

|

||||

|

||||

@@ -116,8 +115,6 @@ async def delete_board(

|

||||

response_model=Union[OffsetPaginatedResults[BoardDTO], list[BoardDTO]],

|

||||

)

|

||||

async def list_boards(

|

||||

order_by: BoardRecordOrderBy = Query(default=BoardRecordOrderBy.CreatedAt, description="The attribute to order by"),

|

||||

direction: SQLiteDirection = Query(default=SQLiteDirection.Descending, description="The direction to order by"),

|

||||

all: Optional[bool] = Query(default=None, description="Whether to list all boards"),

|

||||

offset: Optional[int] = Query(default=None, description="The page offset"),

|

||||

limit: Optional[int] = Query(default=None, description="The number of boards per page"),

|

||||

@@ -125,9 +122,9 @@ async def list_boards(

|

||||

) -> Union[OffsetPaginatedResults[BoardDTO], list[BoardDTO]]:

|

||||

"""Gets a list of boards"""

|

||||

if all:

|

||||

return ApiDependencies.invoker.services.boards.get_all(order_by, direction, include_archived)

|

||||

return ApiDependencies.invoker.services.boards.get_all(include_archived)

|

||||

elif offset is not None and limit is not None:

|

||||

return ApiDependencies.invoker.services.boards.get_many(order_by, direction, offset, limit, include_archived)

|

||||

return ApiDependencies.invoker.services.boards.get_many(offset, limit, include_archived)

|

||||

else:

|

||||

raise HTTPException(

|

||||

status_code=400,

|

||||

|

||||
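The `list_boards` route accepts two calling styles: `all` returns every board, while `offset` together with `limit` returns one page, and anything else is rejected with a 400 error. The dispatch can be sketched in isolation as below. Everything here is a stand-in: `list_items` and `BadRequest` are hypothetical names, a plain list replaces the boards service, and `offset` is treated as an item offset, which is an assumption.

```python
from typing import List, Optional, Sequence


class BadRequest(ValueError):
    """Stand-in for the HTTP 400 error raised by the route."""


def list_items(
    items: Sequence[str],
    all_items: Optional[bool] = None,
    offset: Optional[int] = None,
    limit: Optional[int] = None,
) -> List[str]:
    """Return everything, or one page, or fail if neither mode is fully specified."""
    if all_items:
        return list(items)
    if offset is not None and limit is not None:
        return list(items[offset : offset + limit])
    raise BadRequest("Provide either all_items=True or both offset and limit")


boards = ["alpha", "beta", "gamma", "delta"]
page = list_items(boards, offset=1, limit=2)
```

Testing `offset is not None` rather than truthiness matters here, because an offset of `0` is a valid first page.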

@@ -1,7 +1,6 @@

|

||||

# Copyright (c) 2023 Lincoln D. Stein

|

||||

"""FastAPI route for model configuration records."""

|

||||

|

||||

import contextlib

|

||||

import io

|

||||

import pathlib

|

||||

import shutil

|

||||

@@ -11,7 +10,6 @@ from enum import Enum

|

||||

from tempfile import TemporaryDirectory

|

||||

from typing import List, Optional, Type

|

||||

|

||||

import huggingface_hub

|

||||

from fastapi import Body, Path, Query, Response, UploadFile

|

||||

from fastapi.responses import FileResponse, HTMLResponse

|

||||

from fastapi.routing import APIRouter

|

||||

@@ -29,7 +27,6 @@ from invokeai.app.services.model_records import (

|

||||

ModelRecordChanges,

|

||||

UnknownModelException,

|

||||

)

|

||||

from invokeai.app.util.suppress_output import SuppressOutput

|

||||

from invokeai.backend.model_manager.config import (

|

||||

AnyModelConfig,

|

||||

BaseModelType,

|

||||

@@ -41,12 +38,7 @@ from invokeai.backend.model_manager.load.model_cache.model_cache_base import Cac

|

||||

from invokeai.backend.model_manager.metadata.fetch.huggingface import HuggingFaceMetadataFetch

|

||||

from invokeai.backend.model_manager.metadata.metadata_base import ModelMetadataWithFiles, UnknownMetadataException

|

||||

from invokeai.backend.model_manager.search import ModelSearch

|

||||

from invokeai.backend.model_manager.starter_models import (

|

||||

STARTER_BUNDLES,

|

||||

STARTER_MODELS,

|

||||

StarterModel,

|

||||

StarterModelWithoutDependencies,

|

||||

)

|

||||

from invokeai.backend.model_manager.starter_models import STARTER_MODELS, StarterModel, StarterModelWithoutDependencies

|

||||

|

||||

model_manager_router = APIRouter(prefix="/v2/models", tags=["model_manager"])

|

||||

|

||||

@@ -800,52 +792,22 @@ async def convert_model(

|

||||

return new_config

|

||||

|

||||

|

||||

class StarterModelResponse(BaseModel):

|

||||

starter_models: list[StarterModel]

|

||||

starter_bundles: dict[str, list[StarterModel]]

|

||||

|

||||

|

||||

def get_is_installed(

|

||||

starter_model: StarterModel | StarterModelWithoutDependencies, installed_models: list[AnyModelConfig]

|

||||

) -> bool:

|

||||

for model in installed_models:

|

||||

if model.source == starter_model.source:

|

||||

return True

|

||||

if (

|

||||

(model.name == starter_model.name or model.name in starter_model.previous_names)

|

||||

and model.base == starter_model.base

|

||||

and model.type == starter_model.type

|

||||

):

|

||||

return True

|

||||

return False

|

||||

|

||||

|

||||

@model_manager_router.get("/starter_models", operation_id="get_starter_models", response_model=StarterModelResponse)

|

||||

async def get_starter_models() -> StarterModelResponse:

|

||||

@model_manager_router.get("/starter_models", operation_id="get_starter_models", response_model=list[StarterModel])

|

||||

async def get_starter_models() -> list[StarterModel]:

|

||||

installed_models = ApiDependencies.invoker.services.model_manager.store.search_by_attr()

|

||||

installed_model_sources = {m.source for m in installed_models}

|

||||

starter_models = deepcopy(STARTER_MODELS)

|

||||

starter_bundles = deepcopy(STARTER_BUNDLES)

|

||||

for model in starter_models:

|

||||

model.is_installed = get_is_installed(model, installed_models)

|

||||

if model.source in installed_model_sources:

|

||||

model.is_installed = True

|

||||

# Remove already-installed dependencies

|

||||

missing_deps: list[StarterModelWithoutDependencies] = []

|

||||

|

||||

for dep in model.dependencies or []:

|

||||

if not get_is_installed(dep, installed_models):

|

||||

if dep.source not in installed_model_sources:

|

||||

missing_deps.append(dep)

|

||||

model.dependencies = missing_deps

|

||||

|

||||

for bundle in starter_bundles.values():

|

||||

for model in bundle:

|

||||

model.is_installed = get_is_installed(model, installed_models)

|

||||

# Remove already-installed dependencies

|

||||

missing_deps: list[StarterModelWithoutDependencies] = []

|

||||

for dep in model.dependencies or []:

|

||||

if not get_is_installed(dep, installed_models):

|

||||

missing_deps.append(dep)

|

||||

model.dependencies = missing_deps

|

||||

|

||||

return StarterModelResponse(starter_models=starter_models, starter_bundles=starter_bundles)

|

||||

return starter_models

|

||||

|

||||

|

||||

@model_manager_router.get(

|

||||

@@ -926,51 +888,3 @@ async def get_stats() -> Optional[CacheStats]:

|

||||

"""Return performance statistics on the model manager's RAM cache. Will return null if no models have been loaded."""

|

||||

|

||||

return ApiDependencies.invoker.services.model_manager.load.ram_cache.stats

|

||||

|

||||

|

||||

class HFTokenStatus(str, Enum):

|

||||

VALID = "valid"

|

||||

INVALID = "invalid"

|

||||

UNKNOWN = "unknown"

|

||||

|

||||

|

||||

class HFTokenHelper:

|

||||

@classmethod

|

||||

def get_status(cls) -> HFTokenStatus:

|

||||

try:

|

||||

if huggingface_hub.get_token_permission(huggingface_hub.get_token()):

|

||||

# Valid token!

|

||||

return HFTokenStatus.VALID

|

||||

# No token set

|

||||

return HFTokenStatus.INVALID

|

||||

except Exception:

|

||||

return HFTokenStatus.UNKNOWN

|

||||

|

||||

@classmethod

|

||||

def set_token(cls, token: str) -> HFTokenStatus:

|

||||

with SuppressOutput(), contextlib.suppress(Exception):

|

||||

huggingface_hub.login(token=token, add_to_git_credential=False)

|

||||

return cls.get_status()

|

||||

|

||||

|

||||

@model_manager_router.get("/hf_login", operation_id="get_hf_login_status", response_model=HFTokenStatus)

|

||||

async def get_hf_login_status() -> HFTokenStatus:

|

||||

token_status = HFTokenHelper.get_status()

|

||||

|

||||

if token_status is HFTokenStatus.UNKNOWN:

|

||||

ApiDependencies.invoker.services.logger.warning("Unable to verify HF token")

|

||||

|

||||

return token_status

|

||||

|

||||

|

||||

@model_manager_router.post("/hf_login", operation_id="do_hf_login", response_model=HFTokenStatus)

|

||||

async def do_hf_login(

|

||||

token: str = Body(description="Hugging Face token to use for login", embed=True),

|

||||

) -> HFTokenStatus:

|

||||

HFTokenHelper.set_token(token)

|

||||

token_status = HFTokenHelper.get_status()

|

||||

|

||||

if token_status is HFTokenStatus.UNKNOWN:

|

||||

ApiDependencies.invoker.services.logger.warning("Unable to verify HF token")

|

||||

|

||||

return token_status

|

||||

|

||||

@@ -83,7 +83,7 @@ async def create_workflow(

|

||||

)

|

||||

async def list_workflows(

|

||||

page: int = Query(default=0, description="The page to get"),

|

||||

per_page: Optional[int] = Query(default=None, description="The number of workflows per page"),

|

||||

per_page: int = Query(default=10, description="The number of workflows per page"),

|

||||

order_by: WorkflowRecordOrderBy = Query(

|

||||

default=WorkflowRecordOrderBy.Name, description="The attribute to order by"

|

||||

),

|

||||

@@ -93,5 +93,5 @@ async def list_workflows(

|

||||

) -> PaginatedResults[WorkflowRecordListItemDTO]:

|

||||

"""Gets a page of workflows"""

|

||||

return ApiDependencies.invoker.services.workflow_records.get_many(

|

||||

order_by=order_by, direction=direction, page=page, per_page=per_page, query=query, category=category

|

||||

page=page, per_page=per_page, order_by=order_by, direction=direction, query=query, category=category

|

||||

)

|

||||

|

||||

@@ -7,14 +7,13 @@ from pathlib import Path

|

||||

|

||||

import torch

|

||||

import uvicorn

|

||||

from fastapi import FastAPI, Request

|

||||

from fastapi import FastAPI

|

||||

from fastapi.middleware.cors import CORSMiddleware

|

||||

from fastapi.middleware.gzip import GZipMiddleware

|

||||

from fastapi.openapi.docs import get_redoc_html, get_swagger_ui_html

|

||||

from fastapi.responses import HTMLResponse, RedirectResponse

|

||||

from fastapi.responses import HTMLResponse

|

||||

from fastapi_events.handlers.local import local_handler

|

||||

from fastapi_events.middleware import EventHandlerASGIMiddleware

|

||||

from starlette.middleware.base import BaseHTTPMiddleware, RequestResponseEndpoint

|

||||

from torch.backends.mps import is_available as is_mps_available

|

||||

|

||||

# for PyCharm:

|

||||

@@ -79,29 +78,6 @@ app = FastAPI(

|

||||

lifespan=lifespan,

|

||||

)

|

||||

|

||||

|

||||

class RedirectRootWithQueryStringMiddleware(BaseHTTPMiddleware):

|

||||

"""When a request is made to the root path with a query string, redirect to the root path without the query string.

|

||||

|

||||

For example, to force a Gradio app to use dark mode, users may append `?__theme=dark` to the URL. Their browser may

|

||||

have this query string saved in history or a bookmark, so when the user navigates to `http://127.0.0.1:9090/`, the

|

||||

browser takes them to `http://127.0.0.1:9090/?__theme=dark`.

|

||||

|

||||

This breaks the static file serving in the UI, so we redirect the user to the root path without the query string.

|

||||

"""

|

||||

|

||||

async def dispatch(self, request: Request, call_next: RequestResponseEndpoint):

|

||||

if request.url.path == "/" and request.url.query:

|

||||

return RedirectResponse(url="/")

|

||||

|

||||

response = await call_next(request)

|

||||

return response

|

||||

|

||||

|

||||

# Add the middleware

|

||||

app.add_middleware(RedirectRootWithQueryStringMiddleware)

|

||||

|

||||

|

||||

# Add event handler

|

||||

event_handler_id: int = id(app)

|

||||

app.add_middleware(

|

||||

|

||||

@@ -4,7 +4,6 @@ from __future__ import annotations

|

||||

|

||||

import inspect

|

||||

import re

|

||||

import sys

|

||||

import warnings

|

||||

from abc import ABC, abstractmethod

|

||||

from enum import Enum

|

||||

@@ -193,19 +192,12 @@ class BaseInvocation(ABC, BaseModel):

|

||||

"""Gets a pydantic TypeAdapter for the union of all invocation types."""

|

||||

if not cls._typeadapter or cls._typeadapter_needs_update:

|

||||

AnyInvocation = TypeAliasType(

|

||||

"AnyInvocation", Annotated[Union[tuple(cls.get_invocations())], Field(discriminator="type")]

|

||||

"AnyInvocation", Annotated[Union[tuple(cls._invocation_classes)], Field(discriminator="type")]

|

||||

)

|

||||

cls._typeadapter = TypeAdapter(AnyInvocation)

|

||||

cls._typeadapter_needs_update = False

|

||||

return cls._typeadapter

|

||||

|

||||

@classmethod

|

||||

def invalidate_typeadapter(cls) -> None:

|

||||

"""Invalidates the typeadapter, forcing it to be rebuilt on next access. If the invocation allowlist or

|

||||

denylist is changed, this should be called to ensure the typeadapter is updated and validation respects

|

||||

the updated allowlist and denylist."""

|

||||

cls._typeadapter_needs_update = True

|

||||

|

||||

@classmethod

|

||||

def get_invocations(cls) -> Iterable[BaseInvocation]:

|

||||

"""Gets all invocations, respecting the allowlist and denylist."""

|

||||

@@ -487,26 +479,6 @@ def invocation(

|

||||

title="type", default=invocation_type, json_schema_extra={"field_kind": FieldKind.NodeAttribute}

|

||||

)

|

||||

|

||||

# Validate the `invoke()` method is implemented

|

||||

if "invoke" in cls.__abstractmethods__:

|

||||

raise ValueError(f'Invocation "{invocation_type}" must implement the "invoke" method')

|

||||

|

||||

# And validate that `invoke()` returns a subclass of `BaseInvocationOutput`

|

||||

invoke_return_annotation = signature(cls.invoke).return_annotation

|

||||

|

||||

try:

|

||||

# TODO(psyche): If `invoke()` is not defined, `return_annotation` ends up as the string "BaseInvocationOutput"

|

||||

# instead of the class `BaseInvocationOutput`. This may be a pydantic bug: https://github.com/pydantic/pydantic/issues/7978

|

||||

if isinstance(invoke_return_annotation, str):

|

||||

invoke_return_annotation = getattr(sys.modules[cls.__module__], invoke_return_annotation)

|

||||

|

||||

assert invoke_return_annotation is not BaseInvocationOutput

|

||||

assert issubclass(invoke_return_annotation, BaseInvocationOutput)

|

||||

except Exception:

|

||||

raise ValueError(

|

||||

f'Invocation "{invocation_type}" must have a return annotation of a subclass of BaseInvocationOutput (got "{invoke_return_annotation}")'

|

||||

)

|

||||

|

||||

docstring = cls.__doc__

|

||||

cls = create_model(

|

||||

cls.__qualname__,

|

||||

|

||||

@@ -13,7 +13,6 @@ from diffusers.models.unets.unet_2d_condition import UNet2DConditionModel

|

||||

from diffusers.schedulers.scheduling_dpmsolver_sde import DPMSolverSDEScheduler

|

||||

from diffusers.schedulers.scheduling_tcd import TCDScheduler

|

||||

from diffusers.schedulers.scheduling_utils import SchedulerMixin as Scheduler

|

||||

from PIL import Image

|

||||

from pydantic import field_validator

|

||||

from torchvision.transforms.functional import resize as tv_resize

|

||||

from transformers import CLIPVisionModelWithProjection

|

||||

@@ -511,7 +510,6 @@ class DenoiseLatentsInvocation(BaseInvocation):

|

||||

context: InvocationContext,

|

||||

t2i_adapters: Optional[Union[T2IAdapterField, list[T2IAdapterField]]],

|

||||

ext_manager: ExtensionsManager,

|

||||

bgr_mode: bool = False,

|

||||

) -> None:

|

||||

if t2i_adapters is None:

|

||||

return

|

||||

@@ -521,10 +519,6 @@ class DenoiseLatentsInvocation(BaseInvocation):

|

||||

t2i_adapters = [t2i_adapters]

|

||||

|

||||

for t2i_adapter_field in t2i_adapters:

|

||||

image = context.images.get_pil(t2i_adapter_field.image.image_name)

|

||||

if bgr_mode: # SDXL t2i trained on cv2's BGR outputs, but PIL won't convert straight to BGR

|

||||

r, g, b = image.split()

|

||||

image = Image.merge("RGB", (b, g, r))

|

||||

ext_manager.add_extension(

|

||||

T2IAdapterExt(

|

||||

node_context=context,

|

||||

@@ -553,9 +547,7 @@ class DenoiseLatentsInvocation(BaseInvocation):

|

||||

if not isinstance(single_ipa_image_fields, list):

|

||||

single_ipa_image_fields = [single_ipa_image_fields]

|

||||

|

||||

single_ipa_images = [

|

||||

context.images.get_pil(image.image_name, mode="RGB") for image in single_ipa_image_fields

|

||||

]

|

||||

single_ipa_images = [context.images.get_pil(image.image_name) for image in single_ipa_image_fields]

|

||||

with image_encoder_model_info as image_encoder_model:

|

||||

assert isinstance(image_encoder_model, CLIPVisionModelWithProjection)

|

||||

# Get image embeddings from CLIP and ImageProjModel.

|

||||

@@ -622,17 +614,13 @@ class DenoiseLatentsInvocation(BaseInvocation):

|

||||

for t2i_adapter_field in t2i_adapter:

|

||||

t2i_adapter_model_config = context.models.get_config(t2i_adapter_field.t2i_adapter_model.key)

|

||||

t2i_adapter_loaded_model = context.models.load(t2i_adapter_field.t2i_adapter_model)

|

||||

image = context.images.get_pil(t2i_adapter_field.image.image_name, mode="RGB")

|

||||

image = context.images.get_pil(t2i_adapter_field.image.image_name)

|

||||

|

||||

# The max_unet_downscale is the maximum amount that the UNet model downscales the latent image internally.

|

||||

if t2i_adapter_model_config.base == BaseModelType.StableDiffusion1:

|

||||

max_unet_downscale = 8

|

||||

elif t2i_adapter_model_config.base == BaseModelType.StableDiffusionXL:

|

||||

max_unet_downscale = 4

|

||||

|

||||

# SDXL adapters are trained on cv2's BGR outputs

|

||||

r, g, b = image.split()

|

||||

image = Image.merge("RGB", (b, g, r))

|

||||

else:

|

||||

raise ValueError(f"Unexpected T2I-Adapter base model type: '{t2i_adapter_model_config.base}'.")

|

||||

|

||||

@@ -640,39 +628,29 @@ class DenoiseLatentsInvocation(BaseInvocation):

|

||||

with t2i_adapter_loaded_model as t2i_adapter_model:

|

||||

total_downscale_factor = t2i_adapter_model.total_downscale_factor

|

||||

|

||||

# Resize the T2I-Adapter input image.

|

||||

# We select the resize dimensions so that after the T2I-Adapter's total_downscale_factor is applied, the

|

||||

# result will match the latent image's dimensions after max_unet_downscale is applied.

|

||||

t2i_input_height = latents_shape[2] // max_unet_downscale * total_downscale_factor

|

||||

t2i_input_width = latents_shape[3] // max_unet_downscale * total_downscale_factor

|

||||

|

||||

# Note: We have hard-coded `do_classifier_free_guidance=False`. This is because we only want to prepare

|

||||

# a single image. If CFG is enabled, we will duplicate the resultant tensor after applying the

|

||||

# T2I-Adapter model.

|

||||

#

|

||||

# Note: We re-use the `prepare_control_image(...)` from ControlNet for T2I-Adapter, because it has many

|

||||

# of the same requirements (e.g. preserving binary masks during resize).

|

||||

|

||||

# Assuming fixed dimensional scaling of LATENT_SCALE_FACTOR.

|

||||

_, _, latent_height, latent_width = latents_shape

|

||||

control_height_resize = latent_height * LATENT_SCALE_FACTOR

|

||||

control_width_resize = latent_width * LATENT_SCALE_FACTOR

|

||||

t2i_image = prepare_control_image(

|

||||

image=image,

|

||||

do_classifier_free_guidance=False,

|

||||

width=control_width_resize,

|

||||

height=control_height_resize,

|

||||

width=t2i_input_width,

|

||||

height=t2i_input_height,

|

||||

num_channels=t2i_adapter_model.config["in_channels"], # mypy treats this as a FrozenDict

|

||||

device=t2i_adapter_model.device,

|

||||

dtype=t2i_adapter_model.dtype,

|

||||

resize_mode=t2i_adapter_field.resize_mode,

|

||||

)

|

||||

|

||||

# Resize the T2I-Adapter input image.

|

||||

# We select the resize dimensions so that after the T2I-Adapter's total_downscale_factor is applied, the

|

||||

# result will match the latent image's dimensions after max_unet_downscale is applied.

|

||||

# We crop the image to this size so that the positions match the input image on non-standard resolutions

|

||||

t2i_input_height = latents_shape[2] // max_unet_downscale * total_downscale_factor

|

||||

t2i_input_width = latents_shape[3] // max_unet_downscale * total_downscale_factor

|

||||

if t2i_image.shape[2] > t2i_input_height or t2i_image.shape[3] > t2i_input_width:

|

||||

t2i_image = t2i_image[

|

||||

:, :, : min(t2i_image.shape[2], t2i_input_height), : min(t2i_image.shape[3], t2i_input_width)

|

||||

]

|

||||

|

||||

adapter_state = t2i_adapter_model(t2i_image)

|

||||

|

||||

if do_classifier_free_guidance:

|

||||

@@ -920,8 +898,7 @@ class DenoiseLatentsInvocation(BaseInvocation):

|

||||

# ext = extension_field.to_extension(exit_stack, context, ext_manager)

|

||||

# ext_manager.add_extension(ext)

|

||||

self.parse_controlnet_field(exit_stack, context, self.control, ext_manager)

|

||||

bgr_mode = self.unet.unet.base == BaseModelType.StableDiffusionXL

|

||||

self.parse_t2i_adapter_field(exit_stack, context, self.t2i_adapter, ext_manager, bgr_mode)

|

||||

self.parse_t2i_adapter_field(exit_stack, context, self.t2i_adapter, ext_manager)

|

||||

|

||||

# ext: t2i/ip adapter

|

||||

ext_manager.run_callback(ExtensionCallbackType.SETUP, denoise_ctx)

|

||||

|

||||

@@ -41,7 +41,6 @@ class UIType(str, Enum, metaclass=MetaEnum):

|

||||

# region Model Field Types

|

||||

MainModel = "MainModelField"

|

||||

FluxMainModel = "FluxMainModelField"

|

||||

SD3MainModel = "SD3MainModelField"

|

||||

SDXLMainModel = "SDXLMainModelField"

|

||||

SDXLRefinerModel = "SDXLRefinerModelField"

|

||||

ONNXModel = "ONNXModelField"

|

||||

@@ -53,8 +52,6 @@ class UIType(str, Enum, metaclass=MetaEnum):

|

||||

T2IAdapterModel = "T2IAdapterModelField"

|

||||

T5EncoderModel = "T5EncoderModelField"

|

||||

CLIPEmbedModel = "CLIPEmbedModelField"

|

||||

CLIPLEmbedModel = "CLIPLEmbedModelField"

|

||||

CLIPGEmbedModel = "CLIPGEmbedModelField"

|

||||

SpandrelImageToImageModel = "SpandrelImageToImageModelField"

|

||||

# endregion

|

||||

|

||||

@@ -134,10 +131,8 @@ class FieldDescriptions:

|

||||

clip = "CLIP (tokenizer, text encoder, LoRAs) and skipped layer count"

|

||||

t5_encoder = "T5 tokenizer and text encoder"

|

||||

clip_embed_model = "CLIP Embed loader"

|

||||

clip_g_model = "CLIP-G Embed loader"

|

||||

unet = "UNet (scheduler, LoRAs)"

|

||||

transformer = "Transformer"

|

||||

mmditx = "MMDiTX"

|

||||

vae = "VAE"

|

||||

cond = "Conditioning tensor"

|

||||

controlnet_model = "ControlNet model to load"

|

||||

@@ -145,7 +140,6 @@ class FieldDescriptions:

|

||||

lora_model = "LoRA model to load"

|

||||

main_model = "Main model (UNet, VAE, CLIP) to load"

|

||||

flux_model = "Flux model (Transformer) to load"

|

||||

sd3_model = "SD3 model (MMDiTX) to load"

|

||||

sdxl_main_model = "SDXL Main model (UNet, VAE, CLIP1, CLIP2) to load"

|

||||

sdxl_refiner_model = "SDXL Refiner Main Model (UNet, VAE, CLIP2) to load"

|

||||

onnx_main_model = "ONNX Main model (UNet, VAE, CLIP) to load"

|

||||

@@ -198,7 +192,6 @@ class FieldDescriptions:

|

||||

freeu_s2 = 'Scaling factor for stage 2 to attenuate the contributions of the skip features. This is done to mitigate the "oversmoothing effect" in the enhanced denoising process.'

|

||||

freeu_b1 = "Scaling factor for stage 1 to amplify the contributions of backbone features."

|

||||

freeu_b2 = "Scaling factor for stage 2 to amplify the contributions of backbone features."

|

||||

instantx_control_mode = "The control mode for InstantX ControlNet union models. Ignored for other ControlNet models. The standard mapping is: canny (0), tile (1), depth (2), blur (3), pose (4), gray (5), low quality (6). Negative values will be treated as 'None'."

|

||||

|

||||

|

||||

class ImageField(BaseModel):

|

||||

@@ -252,12 +245,6 @@ class FluxConditioningField(BaseModel):

|

||||

conditioning_name: str = Field(description="The name of conditioning tensor")

|

||||

|

||||

|

||||

class SD3ConditioningField(BaseModel):

|

||||

"""A conditioning tensor primitive value"""

|

||||

|

||||

conditioning_name: str = Field(description="The name of conditioning tensor")

|

||||

|

||||

|

||||

class ConditioningField(BaseModel):

|

||||

"""A conditioning tensor primitive value"""

|

||||

|

||||

|

||||

@@ -1,99 +0,0 @@

|

||||

from pydantic import BaseModel, Field, field_validator, model_validator

|

||||

|

||||

from invokeai.app.invocations.baseinvocation import (

|

||||

BaseInvocation,

|

||||

BaseInvocationOutput,

|

||||

Classification,

|

||||

invocation,

|

||||

invocation_output,

|

||||

)

|

||||

from invokeai.app.invocations.fields import FieldDescriptions, ImageField, InputField, OutputField, UIType

|

||||

from invokeai.app.invocations.model import ModelIdentifierField

|

||||

from invokeai.app.invocations.util import validate_begin_end_step, validate_weights

|

||||

from invokeai.app.services.shared.invocation_context import InvocationContext

|

||||

from invokeai.app.util.controlnet_utils import CONTROLNET_RESIZE_VALUES

|

||||

|

||||

|

||||

class FluxControlNetField(BaseModel):

|

||||

image: ImageField = Field(description="The control image")

|

||||

control_model: ModelIdentifierField = Field(description="The ControlNet model to use")

|

||||

control_weight: float | list[float] = Field(default=1, description="The weight given to the ControlNet")

|

||||

begin_step_percent: float = Field(

|

||||

default=0, ge=0, le=1, description="When the ControlNet is first applied (% of total steps)"

|

||||

)

|

||||

end_step_percent: float = Field(

|

||||

default=1, ge=0, le=1, description="When the ControlNet is last applied (% of total steps)"

|

||||

)

|

||||

resize_mode: CONTROLNET_RESIZE_VALUES = Field(default="just_resize", description="The resize mode to use")

|

||||

instantx_control_mode: int | None = Field(default=-1, description=FieldDescriptions.instantx_control_mode)

|

||||

|

||||

@field_validator("control_weight")

|

||||

@classmethod

|

||||

def validate_control_weight(cls, v: float | list[float]) -> float | list[float]:

|

||||

validate_weights(v)

|

||||

return v

|

||||

|

||||

@model_validator(mode="after")

|

||||

def validate_begin_end_step_percent(self):

|

||||

validate_begin_end_step(self.begin_step_percent, self.end_step_percent)

|

||||

return self

|

||||

|

||||

|

||||

@invocation_output("flux_controlnet_output")

|

||||

class FluxControlNetOutput(BaseInvocationOutput):

|

||||

"""FLUX ControlNet info"""

|

||||

|

||||

control: FluxControlNetField = OutputField(description=FieldDescriptions.control)

|

||||

|

||||

|

||||

@invocation(

|

||||

"flux_controlnet",

|

||||

title="FLUX ControlNet",

|

||||

tags=["controlnet", "flux"],

|

||||

category="controlnet",

|

||||

version="1.0.0",

|

||||

classification=Classification.Prototype,

|

||||

)

|

||||

class FluxControlNetInvocation(BaseInvocation):

|

||||

"""Collect FLUX ControlNet info to pass to other nodes."""

|

||||

|

||||

image: ImageField = InputField(description="The control image")

|

||||

control_model: ModelIdentifierField = InputField(

|

||||

description=FieldDescriptions.controlnet_model, ui_type=UIType.ControlNetModel

|

||||

)

|

||||

control_weight: float | list[float] = InputField(

|

||||

default=1.0, ge=-1, le=2, description="The weight given to the ControlNet"

|

||||

)

|

||||

begin_step_percent: float = InputField(

|

||||

default=0, ge=0, le=1, description="When the ControlNet is first applied (% of total steps)"

|

||||

)

|

||||

end_step_percent: float = InputField(

|

||||

default=1, ge=0, le=1, description="When the ControlNet is last applied (% of total steps)"

|

||||

)

|

||||

resize_mode: CONTROLNET_RESIZE_VALUES = InputField(default="just_resize", description="The resize mode used")

|

||||

# Note: We default to -1 instead of None, because in the workflow editor UI None is not currently supported.

|

||||

instantx_control_mode: int | None = InputField(default=-1, description=FieldDescriptions.instantx_control_mode)

|

||||

|

||||

@field_validator("control_weight")

|

||||

@classmethod

|

||||

def validate_control_weight(cls, v: float | list[float]) -> float | list[float]:

|

||||

validate_weights(v)

|

||||

return v

|

||||

|

||||

@model_validator(mode="after")

|

||||

def validate_begin_end_step_percent(self):

|

||||

validate_begin_end_step(self.begin_step_percent, self.end_step_percent)

|

||||

return self

|

||||

|

||||

def invoke(self, context: InvocationContext) -> FluxControlNetOutput:

|

||||

return FluxControlNetOutput(

|

||||

control=FluxControlNetField(

|

||||

image=self.image,

|

||||

control_model=self.control_model,

|

||||

control_weight=self.control_weight,

|

||||

begin_step_percent=self.begin_step_percent,

|

||||

end_step_percent=self.end_step_percent,

|

||||

resize_mode=self.resize_mode,

|

||||

instantx_control_mode=self.instantx_control_mode,

|

||||

),

|

||||

)

|

||||

@@ -1,38 +1,26 @@

|

||||

from contextlib import ExitStack

|

||||

from typing import Callable, Iterator, Optional, Tuple

|

||||

|

||||

import numpy as np

|

||||

import numpy.typing as npt

|

||||

import torch

|

||||

import torchvision.transforms as tv_transforms

|

||||

from torchvision.transforms.functional import resize as tv_resize

|

||||

from transformers import CLIPImageProcessor, CLIPVisionModelWithProjection

|

||||

|

||||

from invokeai.app.invocations.baseinvocation import BaseInvocation, Classification, invocation

|

||||

from invokeai.app.invocations.fields import (

|

||||

DenoiseMaskField,

|

||||

FieldDescriptions,

|

||||

FluxConditioningField,

|

||||

ImageField,

|

||||

Input,

|

||||

InputField,

|

||||

LatentsField,

|

||||

WithBoard,

|

||||

WithMetadata,

|

||||

)

|

||||

from invokeai.app.invocations.flux_controlnet import FluxControlNetField

|

||||

from invokeai.app.invocations.ip_adapter import IPAdapterField

|

||||

from invokeai.app.invocations.model import TransformerField, VAEField

|

||||

from invokeai.app.invocations.model import TransformerField

|

||||

from invokeai.app.invocations.primitives import LatentsOutput

|

||||

from invokeai.app.services.shared.invocation_context import InvocationContext

|

||||

from invokeai.backend.flux.controlnet.instantx_controlnet_flux import InstantXControlNetFlux

|

||||

from invokeai.backend.flux.controlnet.xlabs_controlnet_flux import XLabsControlNetFlux

|

||||

from invokeai.backend.flux.denoise import denoise

|

||||

from invokeai.backend.flux.extensions.inpaint_extension import InpaintExtension

|

||||

from invokeai.backend.flux.extensions.instantx_controlnet_extension import InstantXControlNetExtension

|

||||

from invokeai.backend.flux.extensions.xlabs_controlnet_extension import XLabsControlNetExtension

|

||||

from invokeai.backend.flux.extensions.xlabs_ip_adapter_extension import XLabsIPAdapterExtension

|

||||

from invokeai.backend.flux.ip_adapter.xlabs_ip_adapter_flux import XlabsIpAdapterFlux

|

||||

from invokeai.backend.flux.inpaint_extension import InpaintExtension

|

||||

from invokeai.backend.flux.model import Flux

|

||||

from invokeai.backend.flux.sampling_utils import (

|

||||

clip_timestep_schedule_fractional,

|

||||

@@ -42,7 +30,7 @@ from invokeai.backend.flux.sampling_utils import (

|

||||

pack,

|

||||

unpack,

|

||||

)

|

||||

from invokeai.backend.lora.conversions.flux_lora_constants import FLUX_LORA_TRANSFORMER_PREFIX

|

||||

from invokeai.backend.lora.conversions.flux_kohya_lora_conversion_utils import FLUX_KOHYA_TRANFORMER_PREFIX

|

||||

from invokeai.backend.lora.lora_model_raw import LoRAModelRaw

|

||||

from invokeai.backend.lora.lora_patcher import LoRAPatcher

|

||||

from invokeai.backend.model_manager.config import ModelFormat

|

||||

@@ -56,7 +44,7 @@ from invokeai.backend.util.devices import TorchDevice

|

||||

title="FLUX Denoise",

|

||||

tags=["image", "flux"],

|

||||

category="image",

|

||||

version="3.2.0",

|

||||

version="3.0.0",

|

||||

classification=Classification.Prototype,

|

||||

)

|

||||

class FluxDenoiseInvocation(BaseInvocation, WithMetadata, WithBoard):

|

||||

@@ -89,24 +77,6 @@ class FluxDenoiseInvocation(BaseInvocation, WithMetadata, WithBoard):

|

||||

positive_text_conditioning: FluxConditioningField = InputField(

|

||||

description=FieldDescriptions.positive_cond, input=Input.Connection

|

||||

)

|

||||

negative_text_conditioning: FluxConditioningField | None = InputField(

|

||||

default=None,

|

||||

description="Negative conditioning tensor. Can be None if cfg_scale is 1.0.",

|

||||

input=Input.Connection,

|

||||

)

|

||||

cfg_scale: float | list[float] = InputField(default=1.0, description=FieldDescriptions.cfg_scale, title="CFG Scale")

|

||||

cfg_scale_start_step: int = InputField(

|

||||

default=0,

|

||||

title="CFG Scale Start Step",

|

||||

description="Index of the first step to apply cfg_scale. Negative indices count backwards from the "

|

||||

+ "last step (e.g. a value of -1 refers to the final step).",

|

||||

)

|

||||

cfg_scale_end_step: int = InputField(

|

||||

default=-1,

|

||||

title="CFG Scale End Step",

|

||||

description="Index of the last step to apply cfg_scale. Negative indices count backwards from the "

|

||||

+ "last step (e.g. a value of -1 refers to the final step).",

|

||||

)

|

||||

width: int = InputField(default=1024, multiple_of=16, description="Width of the generated image.")

|

||||

height: int = InputField(default=1024, multiple_of=16, description="Height of the generated image.")

|

||||

num_steps: int = InputField(

|

||||

@@ -117,18 +87,6 @@ class FluxDenoiseInvocation(BaseInvocation, WithMetadata, WithBoard):

|

||||

description="The guidance strength. Higher values adhere more strictly to the prompt, and will produce less diverse images. FLUX dev only, ignored for schnell.",

|

||||

)

|

||||

seed: int = InputField(default=0, description="Randomness seed for reproducibility.")

|

||||

control: FluxControlNetField | list[FluxControlNetField] | None = InputField(

|

||||

default=None, input=Input.Connection, description="ControlNet models."

|

||||

)

|

||||

controlnet_vae: VAEField | None = InputField(

|

||||

default=None,

|

||||

description=FieldDescriptions.vae,

|

||||

input=Input.Connection,

|

||||

)

|

||||

|

||||

ip_adapter: IPAdapterField | list[IPAdapterField] | None = InputField(

|

||||

description=FieldDescriptions.ip_adapter, title="IP-Adapter", default=None, input=Input.Connection

|

||||

)

|

||||

|

||||

@torch.no_grad()

|

||||

def invoke(self, context: InvocationContext) -> LatentsOutput:

|

||||

@@ -138,19 +96,6 @@ class FluxDenoiseInvocation(BaseInvocation, WithMetadata, WithBoard):

|

||||